LLM Prompt Best Practices For Large Context Windows

by Natalia Kuzminykh, Associate Data Science Content Editor

Building a language-based AI system often involves handling extensive context windows, whether you’re processing entire documents or dealing with lengthy prompts. If this sounds familiar, you might be considering GPT-4 Turbo, with its 128K-token context window, as the perfect solution. However, some studies indicate that bigger context windows do not always lead to better performance, and sometimes it is worth considering other ways to build a reliable application.

In this article, we’ll explore the side effects of large context windows, examining how they reshape prompt design and the creative techniques needed to make full use of this larger context space. We’ll also provide practical tips for improving the performance of your AI system using popular OpenAI models and custom models developed with Hugging Face.

What is a large context window and how does it help?

When observing advancements in the AI field, it’s clear that large language model (LLM) context window sizes are increasing. For example, Anthropic’s Claude offers a 100K-token window, while GPT-4 Turbo and Llama offer 128K and 32K respectively. Google’s PaLM is available with an 8K window, and Cohere provides just over 4K. These sizes dwarf those of only a year ago.

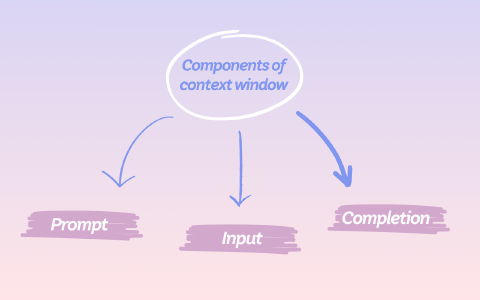

A context window refers to the amount of text the model can consider when generating a response. The size of these windows can vary across LLMs, affecting their ability to understand and process input data. A larger window would enable an LLM to process more extensive information, which is crucial for tasks requiring in-context learning.

Nevertheless, challenges are inherent in all natural language processing systems. Even the most advanced LLMs struggle to sustain their accuracy with lengthy texts.

One of the reasons for this is that LLMs typically perform tasks through prompting. All the necessary information and data are formatted as textual input, and the model produces a text completion. These inputs can contain thousands of tokens, especially when processing extensive documents or when augmented with external information such as search engine results or database queries. If you need a refresher on how those token counts are calculated in the first place, see our guide on calculating LLM token counts.
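Before sending a long prompt, a rough token estimate can tell you whether it is likely to fit. The sketch below assumes OpenAI’s commonly cited rule of thumb that one token is about four characters of English text; `estimate_tokens`, `fits_in_window` and the 128K budget are illustrative choices, and for exact counts you would use the model’s own tokenizer (for example, OpenAI’s `tiktoken` library).

```python
import math

# Rule-of-thumb assumption: roughly 4 characters of English text per token.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Roughly estimate the token count of `text` (a heuristic, not an exact count)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_window(prompt: str, window: int = 128_000, reserve_for_output: int = 4_000) -> bool:
    """Check whether a prompt leaves room for a response within the window.

    `window` and `reserve_for_output` are illustrative values, not the
    documented limits of any specific model.
    """
    return estimate_tokens(prompt) + reserve_for_output <= window


document = "word " * 100_000  # 500,000 characters of filler text
print(estimate_tokens(document))  # 125000
print(fits_in_window(document))   # False
```

Reserving space for the output matters because the response shares the same window as the prompt.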

Another common challenge is the model’s difficulty in maintaining focus on detail, especially for information located in the middle of the text as the context window grows. This situation can result in a dilution of attention, where important nuances and specifics may be overlooked or given less importance in the model’s analysis and responses.

Addressing these scenarios requires developers to efficiently manage long sequences and introduce new techniques to maintain the overall performance of the AI system.

What are the issues associated with large context windows?

How a larger context window affects prompt design

When you’re setting up an AI model for a specific business use, the first step is usually to create or tweak a prompt. This step is key because the prompt is what the model uses to generate answers. It’s important to get the prompt length right, neither too long nor too short, so the AI can understand it and respond in a way that is sensible and useful.

To make the most of the model’s ability to handle information, it helps to summarize the essentials and highlight what matters most. This way, you work within the model’s limits and still get the results you want.

Overall, the impact of context window size on LLM performance is multifaceted and affects several aspects:

- Coherence: A bigger window means the model can see more of the conversation at once, which helps it make more sense of the text. With a smaller window, it might miss earlier parts, leading to answers that repeat or contradict what was said before.

- Relevance: The ability to look back at more of the conversation enables the AI to give answers that fit better with what’s being discussed.

- Memory: If the context window is too small, the model might forget key details mentioned before. A larger window helps it remember and use these details.

- Computational resources: Looking at more text at once requires more computing power and memory, which can slow things down and cost more.

- Training and fine-tuning: The context window size sets the limit for how much text the model can learn from in one go.

- Long-form content generation: For creating longer text, a bigger window is better. It helps the model stay on topic throughout the piece.
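To make the computational-resources point concrete: in a standard Transformer, self-attention compares every token with every other token, so a naive implementation builds an n × n score matrix per head. The sketch below assumes that naive layout, 16-bit (2-byte) scores, and a single head in a single layer. Optimized kernels such as FlashAttention avoid storing the full matrix, but compute still grows quadratically with sequence length.

```python
def attention_scores_bytes(seq_len: int, bytes_per_value: int = 2) -> int:
    """Memory for one naive n x n attention score matrix (one head, one layer)."""
    return seq_len * seq_len * bytes_per_value


# Compare a few context lengths; 1 GiB = 2**30 bytes.
for n in (4_000, 32_000, 128_000):
    gib = attention_scores_bytes(n) / 2**30
    print(f"{n:>7} tokens -> {gib:8.2f} GiB per head per layer")
```

Going from 32K to 128K tokens (4×) multiplies the size of the naive score matrix by 16×, which is why longer windows cost disproportionately more.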

In short, context window size matters because it influences how well the AI can understand, remember, and respond to text. This affects everything from keeping the conversation coherent to how much it costs to run the model.

Prompt best practices for large contexts

Here is a range of techniques for crafting effective prompts for larger contexts.

Be clear in your instructions

LLMs can’t guess what you’re thinking. If you need concise answers, say so. For more complex insights, ask for an expert opinion. Always state the format you prefer. The more accurately you describe your requirements, the better the model can serve you.

Strategies for better prompts:

- Provide detailed queries: Include essential details and context in your requests to receive more relevant responses. Vague instructions often lead to guesswork, reducing the accuracy of the output.

Worse: Tell me about neural networks

Better: Can you tell me how neural networks help recognize pictures? I'd like to know about the steps they take and how they're used in things like identifying faces or objects in photos.

- Use clear separators: For complex prompts, use symbols like triple quotes or section headers to divide your prompt into clear parts. This particularly helps with complicated tasks, making it easier for the model to understand exactly what you’re asking.

SYSTEM

You will be given a set of customer reviews for two different products, each set enclosed in curly braces. Your task is to first identify key points of praise or criticism for each product based on the reviews. Then, compare these key points to determine which product seems to provide better value for customers. Conclude with a brief recommendation.

USER

{review product A} insert reviews for product A here {/review product A}

{review product B} insert reviews for product B here {/review product B}
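If you assemble prompts like this in code, generating the delimiters in one place avoids mismatched opening and closing tags. A minimal sketch, assuming the `{review product A}` ... `{/review product A}` convention from the example above and the role/content message list used by chat-style APIs; `wrap` and `build_review_prompt` are hypothetical helpers.

```python
SYSTEM_PROMPT = (
    "You will be given a set of customer reviews for two different products, "
    "each set enclosed in curly braces. Your task is to first identify key points "
    "of praise or criticism for each product based on the reviews. Then, compare "
    "these key points to determine which product seems to provide better value "
    "for customers. Conclude with a brief recommendation."
)


def wrap(label: str, body: str) -> str:
    """Enclose `body` in matching tags, e.g. {label} ... {/label}."""
    return f"{{{label}}} {body.strip()} {{/{label}}}"


def build_review_prompt(reviews_a: list[str], reviews_b: list[str]) -> list[dict]:
    """Build system + user messages with clearly delimited review sections."""
    user = "\n\n".join([
        wrap("review product A", "\n".join(reviews_a)),
        wrap("review product B", "\n".join(reviews_b)),
    ])
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user},
    ]


messages = build_review_prompt(["Great battery."], ["Screen scratches easily."])
print(messages[1]["content"])
```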

- Outline the steps: If your task can be broken down into steps, do so. Laying out a clear path can guide the model to better outcomes.

SYSTEM

You will receive a customer feedback message enclosed in triple quotes. Follow these steps to address the feedback effectively.

Step 1 - Start by acknowledging receipt of the customer's feedback. Use a prefix that says "Acknowledgment: " and craft a sentence that expresses gratitude for the feedback provided.

Step 2 - Extract and summarize the main points or concerns raised by the customer in one sentence. Use a prefix that says "Key Points: ".

Step 3 - Based on the key points identified in Step 2, propose a solution or outline the next steps to address the customer's concerns. Start this with a prefix that says "Solution/Next Steps: ".

USER

"""insert customer feedback here"""

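A side benefit of asking for fixed prefixes is that the reply becomes easy to post-process. The sketch below assumes the model uses the three prefixes exactly, each at the start of its own line. `parse_feedback_reply` is a hypothetical helper, and it raises an error on missing sections because models do not always follow the requested format.

```python
PREFIXES = ("Acknowledgment: ", "Key Points: ", "Solution/Next Steps: ")


def parse_feedback_reply(reply: str) -> dict[str, str]:
    """Map each expected section name (prefix without ': ') to the text after it."""
    sections: dict[str, str] = {}
    for line in reply.splitlines():
        line = line.strip()
        for prefix in PREFIXES:
            if line.startswith(prefix):
                sections[prefix.rstrip(": ")] = line[len(prefix):].strip()
    # Fail loudly if the model skipped a step.
    missing = [p.rstrip(": ") for p in PREFIXES if p.rstrip(": ") not in sections]
    if missing:
        raise ValueError(f"Reply is missing sections: {missing}")
    return sections


reply = (
    "Acknowledgment: Thank you for taking the time to share your feedback.\n"
    "Key Points: The delivery arrived late and the box was damaged.\n"
    "Solution/Next Steps: We will send a replacement and review our courier process."
)
print(parse_feedback_reply(reply)["Key Points"])
```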
- Include examples: Sometimes, showing is better than telling. If you want the model to follow a specific style or format, providing an example can be more effective than a long explanation.

SYSTEM

Use metaphors to explain complex scientific principles in a way that connects with everyday experiences.

USER

Teach me about gravity.

ASSISTANT

Imagine the sun as a giant bowling ball placed on a trampoline. Earth and the other planets are like marbles moving around it. The weight of the bowling ball creates a dip in the trampoline, guiding the path of the marbles around it. This is how gravity works; the mass of objects curves space, directing the motion of objects around them.

USER

Teach me about photosynthesis.

<...>

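In a chat-style API, the example exchange is placed in the message list before the real question, so the model sees a worked answer in the desired style. A minimal sketch using the role/content message format; `build_few_shot` is a hypothetical helper and the example answer is shortened.

```python
def build_few_shot(system: str, examples: list[tuple[str, str]], question: str) -> list[dict]:
    """Return chat messages: system prompt, example user/assistant pairs, then the new question."""
    messages = [{"role": "system", "content": system}]
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": question})
    return messages


messages = build_few_shot(
    "Use metaphors to explain complex scientific principles in a way that "
    "connects with everyday experiences.",
    [("Teach me about gravity.",
      "Imagine the sun as a giant bowling ball placed on a trampoline.")],
    "Teach me about photosynthesis.",
)
print([m["role"] for m in messages])  # ['system', 'user', 'assistant', 'user']
```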
- Specify output length: Letting the model know how long you want the response can be helpful. While specifying an exact word count might not always work perfectly, asking for a certain number of paragraphs or bullet points can guide the model to produce outputs of a desired length.

USER

Summarize the text delimited by triple quotes in about 50 words.

"""insert text here"""

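Because length instructions are followed only approximately, it can help to check the result and retry or trim when it drifts too far from the target. A sketch, assuming a plain whitespace word count and an illustrative 20% tolerance; `summary_prompt` and `within_length` are hypothetical helpers.

```python
def summary_prompt(text: str, target_words: int = 50) -> str:
    """Build the summarization prompt with the text delimited by triple quotes."""
    return (
        f"Summarize the text delimited by triple quotes in about {target_words} words.\n\n"
        f'"""{text}"""'
    )


def within_length(response: str, target_words: int = 50, tolerance: float = 0.2) -> bool:
    """True if the response's word count is within +/- tolerance of the target."""
    count = len(response.split())
    return abs(count - target_words) <= target_words * tolerance


print(summary_prompt("LLMs process text as tokens."))
print(within_length("word " * 55))  # True: 55 words is within 10 of 50
print(within_length("word " * 70))  # False: 70 words is 20 over the target
```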
Break down complex tasks

As in software development, decomposing a complex task into smaller, more manageable pieces can improve accuracy and efficiency. This approach also helps structure a workflow in which the output of one task feeds into the next.

Strategies for better prompts:

- Use intent classification: For tasks that need different instructions based on the scenario, first identify the type of request. This helps in providing the most relevant instructions for that particular case. You can even structure this process in stages, where each stage has specific instructions, reducing errors and potentially lowering costs.

- Summarize or filter long conversations: Since there’s a limit to how much text the model can consider at once, long dialogues might need summarizing or filtering to keep the conversation relevant and manageable.

- Piecewise document summarization: For summarizing long documents, break them into sections and summarize each one separately. Then, combine these summaries for an overall view. This recursive approach can also include a running summary to maintain context throughout the document.
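Piecewise summarization can be sketched as a loop that splits the document, summarizes each chunk together with the summary so far, and finishes with a combining pass. This is a sketch under stated assumptions: chunks are split by character count rather than tokens, and `summarize` is a hypothetical stand-in for an LLM call (the usage example passes a trivial stub so the code runs offline).

```python
from typing import Callable


def split_into_chunks(text: str, max_chars: int) -> list[str]:
    """Split text into consecutive chunks of at most max_chars characters."""
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]


def piecewise_summarize(text: str, summarize: Callable[[str], str], max_chars: int = 2_000) -> str:
    """Summarize each chunk with the running summary as context, then combine."""
    running_summary = ""
    section_summaries: list[str] = []
    for chunk in split_into_chunks(text, max_chars):
        prompt = (
            f"Summary so far:\n{running_summary}\n\n"
            f"Summarize the next section:\n{chunk}"
        )
        section_summaries.append(summarize(prompt))
        # In practice you would cap or re-summarize this to keep it within the window.
        running_summary = " ".join(section_summaries)
    # Final pass: condense the per-section summaries into one overview.
    return summarize("Combine these section summaries:\n" + "\n".join(section_summaries))


calls: list[str] = []


def fake_summarize(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: records the prompt, returns its last line truncated."""
    calls.append(prompt)
    return prompt.splitlines()[-1][:30]


result = piecewise_summarize("a" * 5_000, fake_summarize, max_chars=2_000)
print(len(calls))  # 4: three chunk summaries plus one combining call
```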

Use external tools

Use other tools to extend the model’s capabilities. For example, a text retrieval system can supply relevant information the model might not have, and a code execution engine can run calculations or code.

Strategies for better prompts:

- Use embeddings for efficient search: Embeddings can help find and dynamically incorporate relevant information into the model’s input. This is especially useful when the model needs up-to-date or specific information not included in its training data.
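Embedding-based retrieval comes down to ranking stored passages by vector similarity to the query and adding the best matches to the prompt. A sketch, assuming the embeddings were already computed by some embedding model; the three-dimensional vectors below are made-up toy values, not real model output, and `top_k` is a hypothetical helper.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], passages: list[tuple[str, list[float]]], k: int = 2) -> list[str]:
    """Return the k passage texts whose embeddings are most similar to the query."""
    ranked = sorted(passages, key=lambda p: cosine_similarity(query_vec, p[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


# Toy (text, embedding) pairs: values are illustrative, not from a real model.
passages = [
    ("Refund policy: refunds within 30 days.", [0.9, 0.1, 0.0]),
    ("Shipping takes 3-5 business days.", [0.1, 0.9, 0.1]),
    ("Our office dog is named Biscuit.", [0.0, 0.1, 0.9]),
]
query_vec = [0.8, 0.2, 0.0]  # pretend embedding of "How do I get a refund?"

context = "\n".join(top_k(query_vec, passages, k=1))
prompt = f'Answer using only the context below.\n\nContext:\n"""{context}"""\n\nQuestion: How do I get a refund?'
print(prompt)
```

Only the most relevant passage reaches the prompt, which keeps the context short instead of filling the window with everything available.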

Strategies for sequential prompting to maintain narrative or argumentative coherence

To enhance narrative or argumentative coherence, it’s crucial to understand and leverage the way these models process information. Recent research highlights a U-shaped pattern in LLM performance, where information at the beginning and end of a context window is more reliably processed than information positioned in the middle.

This phenomenon mirrors the Serial Position Effect observed in human cognition, suggesting that LLMs, like humans, are better able to remember information presented at the start and close of a list or sequence.

Given this insight, strategic sequential prompting becomes a vital tool for maintaining coherence over lengthy narratives. Here’s how to implement effective strategies based on these findings:

- Leverage the Serial Position Effect: When constructing a narrative or developing an argument, ensure that your key points, questions, or summaries are introduced early on and reiterated or concluded towards the end. This positioning helps maintain coherence by ensuring that the model keeps the main themes or arguments in focus.

- Chronological arrangement: Arrange content or past interactions in chronological order. This helps human readers follow the progression of events or arguments, and it may also help some LLMs process the information more effectively. For example, when discussing events, historical developments, or a series of studies, presenting them in the order they occurred can help both the model and the reader maintain a clear narrative thread.

- Query-aware contextualization: Placing the query or key points both before and after large chunks of text acts as bookends that reinforce the model’s focus on the relevant information. This technique has shown near-perfect performance on synthetic key-value retrieval tasks. Its benefit for broader tasks such as multi-document question answering is less clear-cut, but in long inputs such as argumentative essays it can still help remind the model of the core topics or questions at hand, maintaining coherence across a larger context window.

- Segmentation and summarization: For very large contexts, breaking down the text into smaller segments and providing summaries at the beginning or end of each segment can help. This technique capitalizes on the model’s strengths at both ends of the context window and ensures that essential details are not lost. Summarizing key points before moving on to the next segment can serve as a cognitive and contextual anchor for the LLM, enhancing its ability to maintain narrative or argumentative coherence.
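The chronological-ordering and query-aware ideas can be combined when assembling a long prompt: sort the documents by date, then state the question both before and after them. A sketch, assuming each document carries an ISO-format date string; the `Document` type and `build_bookended_prompt` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Document:
    date: str   # ISO format (YYYY-MM-DD), so string order matches date order
    title: str
    text: str


def build_bookended_prompt(question: str, docs: list[Document]) -> str:
    """Place the question before and after the documents, which are sorted oldest first."""
    ordered = sorted(docs, key=lambda d: d.date)
    body = "\n\n".join(
        f"[{i}] ({d.date}) {d.title}\n{d.text}" for i, d in enumerate(ordered, start=1)
    )
    return (
        f"Question: {question}\n\n"
        f"Documents (oldest first):\n{body}\n\n"
        f"Using the documents above, answer the question: {question}"
    )


docs = [
    Document("2023-07-01", "Follow-up study", "Replicated the effect at larger scale."),
    Document("2021-03-15", "Original study", "First reported the effect."),
]
print(build_bookended_prompt("How did the evidence evolve?", docs))
```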

The role of attention mechanisms in handling larger contexts

A context window acts as a hard boundary that limits the scope of information a model can draw on when making predictions. Attention, on the other hand, is a component of many neural network architectures that allows models to focus on specific segments of the input. In a Transformer-based model, attention is crucial for identifying and tracing connections and dependencies not only within a single sentence but also across multiple sentences.

Understanding the Interaction between the Context Window and Attention

Scope limitation: The context window sets the boundaries for attention. However sophisticated the attention mechanism is, it cannot attend to tokens outside the context window.

Selective focus: Within the context window, attention helps the LLM focus selectively on the most relevant parts of the input, producing coherent and semantically relevant responses.

Sequential processing: When the input is longer than the context window, the text has to be processed sequentially, in pieces that each fit the window, so that attention is applied to different parts of the text as they pass through it.
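Sequential processing is usually handled by the application rather than the model: the input is split into overlapping windows that each fit the context limit, so no passage loses all of its surrounding context at a boundary. A sketch over a list of token IDs, assuming the text has already been tokenized; `sliding_windows` is a hypothetical helper and the sizes are illustrative.

```python
def sliding_windows(tokens: list[int], window: int, overlap: int) -> list[list[int]]:
    """Split tokens into windows of at most `window` items, consecutive windows sharing `overlap` items."""
    if not 0 <= overlap < window:
        raise ValueError("overlap must be >= 0 and smaller than window")
    step = window - overlap
    windows = []
    for start in range(0, len(tokens), step):
        windows.append(tokens[start:start + window])
        if start + window >= len(tokens):
            break  # this window already reaches the end of the input
    return windows


print(sliding_windows(list(range(10)), window=4, overlap=1))
# [[0, 1, 2, 3], [3, 4, 5, 6], [6, 7, 8, 9]]
```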

Influence on LLM Performance

Context constraints: If the necessary context is cut off, the model may not be able to perform the task.

Efficiency: While attention improves a model’s efficiency within the context window, it is unable to compensate for the lack of information resulting from restrictions imposed by the window’s size.

Attention is a powerful feature of LLMs, allowing them to process input in an adaptable, context-sensitive way. However, the context window sets a hard limit on what the model can consider at all. Skillful management of context window constraints is therefore vital to getting the most out of the attention mechanism in LLMs.

Example LLMs with large context windows and their prompt formats

Prompt formats differ considerably between language models. The following examples illustrate how these formats vary across providers and model families such as OpenAI, Anthropic, Mistral, Meta’s Llama, and other open-source models.

OpenAI

Models introduced by OpenAI, such as GPT-3.5 Turbo and GPT-4, work best when instructions are placed at the beginning of the prompt. The instruction is usually separated from the context with three hash signs (###) or triple quotation marks (""").

Moreover, newer models use the Chat Completions API, whose message format lets you place the instruction and its associated context in a system message and a user message, respectively.

One of OpenAI’s guidelines is to write prompts that are detailed, clear, and concise. When tailoring an AI system to your use case, make sure your prompts describe the desired context, expected outcome, length, format, and style.

For instance, consider this prompt example:

######

You are an expert summarizer.

Summarize the text below as a bullet point list of the most important points.

######

Text: {text input here}

A properly structured prompt of this nature assists the model with the task at hand, resulting in the generation of more precise responses.
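With the Chat Completions API, the same prompt can be split so that the instruction goes in the system message and the text to summarize goes in the user message. A sketch that only builds the request payload; `build_summary_messages` is a hypothetical helper, the model name is an assumption, and the commented-out call shows how the request would be sent with the official `openai` Python package (v1 or later), which requires an API key.

```python
def build_summary_messages(text: str) -> list[dict]:
    """Instruction in the system message; delimited context in the user message."""
    instruction = (
        "You are an expert summarizer.\n"
        "Summarize the text below as a bullet point list of the most important points."
    )
    return [
        {"role": "system", "content": instruction},
        {"role": "user", "content": f'Text: """{text}"""'},
    ]


messages = build_summary_messages("Large context windows let models read whole documents.")

# Sending the request (requires `pip install openai` and OPENAI_API_KEY):
# from openai import OpenAI
# client = OpenAI()
# response = client.chat.completions.create(model="gpt-4-turbo", messages=messages)
# print(response.choices[0].message.content)
```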

Anthropic

Anthropic’s Claude model uses a dialogue structure for prompts. The official documentation states that special tokens were used during its training to indicate the speaker: \n\nHuman: marks questions or instructions, while \n\nAssistant: marks the AI’s replies. For instance:

\n\nHuman: {Your question or instruction here}

\n\nAssistant:

Following this structure keeps your prompts consistent with how Claude was trained. The model was designed to work best as an interactive, conversational agent, and these special tokens are pivotal to its performance. Users who deviate from this format have found that the output can be unexpectedly irregular or, in the worst case, considerably degraded.
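For the Human/Assistant format described here, a multi-turn prompt is a sequence of alternating `\n\nHuman:` and `\n\nAssistant:` segments that ends with an open `\n\nAssistant:` turn for the model to complete. A sketch with a hypothetical `build_claude_prompt` helper; note that this raw-string format belongs to Anthropic’s legacy Text Completions API, and the newer Messages API takes a list of role/content messages instead.

```python
def build_claude_prompt(turns: list[tuple[str, str]], question: str) -> str:
    """Format prior (human, assistant) turns plus a new question in Human/Assistant style."""
    prompt = ""
    for human, assistant in turns:
        prompt += f"\n\nHuman: {human}\n\nAssistant: {assistant}"
    # End with an open Assistant turn so the model writes the reply.
    prompt += f"\n\nHuman: {question}\n\nAssistant:"
    return prompt


print(repr(build_claude_prompt([], "Summarize the attached report.")))
# '\n\nHuman: Summarize the attached report.\n\nAssistant:'
```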

Mistral

Mistral 7B and Mixtral 8x7B use a prompt style similar to Llama’s, with some modifications. The structure is as follows:

When building a chat application with Llama, the beginning of user input is marked by [INST] and its end by [/INST]. No specific marker is needed for the model’s output. The <s> token marks the beginning of the sequence and </s> closes each completed model answer, while Llama 2 wraps the system message in <<SYS>> and <</SYS>> tags.

<s>[INST] Instruction [/INST] Model answer</s>[INST] Follow-up instruction [/INST]

Adhering to this format is critical because it must be consistent with the training process. If a different prompt structure is used, the model’s performance may fall short of expectations, especially over multiple turns in conversation-based applications.
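The format can be generated from a conversation history with a small helper. A sketch that reproduces the `<s>[INST] ... [/INST] answer</s>[INST] ... [/INST]` pattern shown above; `build_mistral_prompt` is a hypothetical helper, and because whitespace details vary between model versions, the tokenizer’s own chat template (Hugging Face `tokenizer.apply_chat_template`) is the safer source of truth in practice.

```python
def build_mistral_prompt(history: list[tuple[str, str]], instruction: str) -> str:
    """Format completed (instruction, answer) pairs plus a new instruction.

    Follows: <s>[INST] Instruction [/INST] Model answer</s>[INST] Follow-up [/INST]
    """
    prompt = "<s>"
    for user_msg, answer in history:
        prompt += f"[INST] {user_msg} [/INST] {answer}</s>"
    # Leave the final turn open so the model generates the answer.
    prompt += f"[INST] {instruction} [/INST]"
    return prompt


print(build_mistral_prompt([("Instruction", "Model answer")], "Follow-up instruction"))
# <s>[INST] Instruction [/INST] Model answer</s>[INST] Follow-up instruction [/INST]
```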

Conclusions

In conclusion, the excitement around bigger context windows in LLMs is understandable, but as we saw in this article, bigger isn’t always better on its own. Successfully using large-context language models involves more than just choosing the one with the biggest window. It requires careful thought about how to ask questions and how to organize information so that the AI can use it effectively. Finding the right balance and using these tools thoughtfully is the key to making LLM-powered systems work better for us.

For organizations building LLM-powered products that need to handle large context windows reliably, our LLM consulting services provide hands-on guidance from prompt design through to production deployment.