Calculating LLM Token Counts: A Practical Guide

by Natalia Kuzminykh, Associate Data Science Content Editor

As we explored in depth in the first two parts of this series (one, two), LLMs such as GPT-4, LLaMA, or Gemini process language by breaking text into tokens, each of which is represented by an integer.

The number of tokens a model can process at a time, its context window, directly impacts how it comprehends, generates, and interacts with text. This limitation can affect the depth of analysis, the context retention, and the overall quality of output. For instance, in tasks involving extensive text analysis or sophisticated dialogue generation, reaching the token limit can mean losing crucial context or truncating important information. Teams building production systems often work with our AI consultants to design around these constraints rather than discovering them in production.

This article aims to demystify the concept of token counts in LLMs, detailing how they influence model performance and interaction. Starting with the basics, we’ll define what a token is and how tokens are used for text processing. The discussion then moves to the tokenization process, examining different models and the tools used for token counting. We’ll also look at both manual and automated methods for calculating tokens, highlighting their roles in larger datasets.

Concluding with best practices, we’ll offer insights into efficient token management, multi-language tokenization challenges, and strategies for minimizing token counts without losing content quality. This guide aims to equip readers with a thorough understanding of token counts, enhancing their use and application in LLMs.

Understanding Tokens in LLMs

Let’s pause for a moment to briefly explore the core concept of a token before we proceed any further.

Definition of a token in the context of language models

Tokens can be described as fragments of language – this can mean whole words, parts of words, or even punctuation marks. This fragmentation is a critical first step in language model processing.

For example, in English, a token typically represents about 4 characters or roughly three-quarters of a word. Keep in mind that tokens aren’t uniformly sized or consistent across different languages.
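The rule of thumb above can be turned into a quick back-of-the-envelope estimator. The sketch below is only an approximation for English text: the function names and constants are illustrative assumptions, not part of any tokenizer library, and a real tokenizer will often give a different count.

```python
import math

CHARS_PER_TOKEN = 4      # rule of thumb for English text
WORDS_PER_TOKEN = 0.75   # roughly three-quarters of a word per token


def estimate_tokens_by_chars(text: str) -> int:
    """Rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_tokens_by_words(text: str) -> int:
    """Rough token estimate based on whitespace-separated word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


sample = "Tokens are fragments of language."
print(estimate_tokens_by_chars(sample))  # 9  (33 characters / 4, rounded up)
print(estimate_tokens_by_words(sample))  # 7  (5 words / 0.75, rounded up)
```

The two estimates rarely agree exactly, which is a useful reminder that they are heuristics for budgeting, not substitutes for running the model’s own tokenizer.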

In some cases, a single complex word might be broken down into multiple tokens, or conversely, short words and a following space might be combined into a single token. This flexibility in tokenization allows models to efficiently process a wide variety of text structures.

The variability is more pronounced when considering different languages. For example, languages like German, which often combine words, or languages with non-Latin scripts like Chinese, present unique challenges in tokenization.

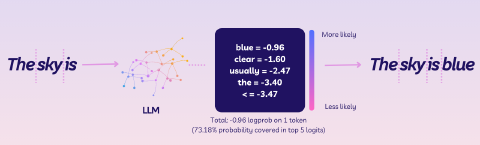

How LLMs use tokens to process and generate text

The primary goal of an LLM isn’t just to predict or generate the next token in a sequence, but to create a rich, multi-dimensional space where each token finds its place based on meaning and context. This process is akin to mapping the stars in the sky – each word is a point of light, and the model’s job is to understand how these points relate to each other.

During its training phase, an LLM is fed a massive variety of text – each offering unique combinations of tokens. From technical manuals to poetic verses, the AI model learns not just the overt meaning of each token but also the subtle nuances of their use in different contexts.

Over time, the LLM begins to recognize patterns. For instance, consider how an LLM learns to handle tokens associated with the concept of time.

With a basic tokenizer, ‘time’ and ‘times’ would be treated as distinct tokens due to the added ‘s’:

- ’time’ - a singular noun referring to the concept of duration or a specific moment vs.

- ’times’ - either the plural form or a verb form, as in ‘he times his runs’

With a more advanced technique such as subword tokenization, however, ‘times’ might be split into something like [“time”, “s”], exposing the root word “time” and the suffix “s” as separate components.

This approach can help the model understand the relationship between these two words better, acknowledging that they share a common root but differ in number or grammatical function.

This versatility in understanding is crucial for the LLM to generate responses that aren’t just grammatically correct but also contextually nuanced. The model continuously refines its understanding of token relationships, constantly evolving its capacity to interpret and generate more sophisticated text.

The cost of tokens – their value in the LLM ‘economy’

In terms of the economy of LLMs, tokens can be thought of as a currency.

Each token that the model processes requires computational resources – memory, processing power, and time. Thus, the more tokens a model has to process, the greater the computational cost. This is particularly relevant when dealing with extensive texts or intricate linguistic structures where the number of tokens can rapidly increase.
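To make the currency analogy concrete, here is a minimal sketch of how a per-request cost could be estimated from token counts. The function name and the prices are hypothetical placeholders; real prices vary by provider and model and should be taken from the provider’s pricing page.

```python
def estimate_cost(prompt_tokens: int, completion_tokens: int,
                  prompt_price_per_1k: float,
                  completion_price_per_1k: float) -> float:
    """Estimated request cost, in the same currency as the prices."""
    prompt_cost = (prompt_tokens / 1000) * prompt_price_per_1k
    completion_cost = (completion_tokens / 1000) * completion_price_per_1k
    return prompt_cost + completion_cost


# Hypothetical prices, for illustration only.
cost = estimate_cost(1500, 500,
                     prompt_price_per_1k=0.01,
                     completion_price_per_1k=0.03)
print(f"{cost:.4f}")  # 0.0300
```

Because cost scales linearly with token count, trimming a prompt by a fixed share reduces the prompt part of the bill by the same share.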

The balance of the token economy becomes a crucial aspect of model design and application. Developers must consider the trade-off between the depth of analysis and the computational resources available.

In practical terms, this often involves optimizing the prompt to ensure that the most relevant information is processed within the token limit. For users of LLMs, understanding this token economy is key to effectively utilizing these models. By tailoring inputs to be concise yet informative, users can maximize the output quality without overburdening the model’s processing capacity. This kind of prompt and architecture tuning sits at the heart of our LLM consulting and development work, where small changes in token strategy compound into significant cost and latency savings.

Tokenization Process

Having looked at how tokens function in generative AI models, let’s transition to the practical aspect and explore how to implement them in your applications.

Tokenizing unstructured text into token IDs is essential for any AI pipeline. Starting with an input, such as a sentence or a document, the process breaks it down into manageable units. For example, the tokenized sentence “The evil that men do lives after them” would become: | 791 | 14289 | 430 | 3026 | 656 | 6439 | 1306 | 1124 |.

As you can see, during encoding, each token is assigned a unique numerical identifier. This step is important because AI models, especially neural networks, interpret and process numerical data rather than direct text. The mapping typically involves a predefined vocabulary, assigning each unique token a specific integer.
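The mapping from tokens to integers can be illustrated with a toy vocabulary. This is a deliberately simplified sketch that splits on whitespace; the helper names are made up for illustration, and production tokenizers use much larger, pre-trained vocabularies, so the IDs below will not match the ones shown above.

```python
def build_vocab(corpus: list[str]) -> dict[str, int]:
    """Assign each unique whitespace-separated token the next free integer ID."""
    vocab: dict[str, int] = {}
    for sentence in corpus:
        for token in sentence.split():
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab


def encode(text: str, vocab: dict[str, int]) -> list[int]:
    """Map each token to its ID (raises KeyError for unknown tokens)."""
    return [vocab[token] for token in text.split()]


def decode(ids: list[int], vocab: dict[str, int]) -> str:
    """Map IDs back to tokens and join them with spaces."""
    id_to_token = {i: t for t, i in vocab.items()}
    return " ".join(id_to_token[i] for i in ids)


vocab = build_vocab(["The evil that men do lives after them"])
ids = encode("men do evil", vocab)
print(ids)                 # [3, 4, 1]
print(decode(ids, vocab))  # men do evil
```

A word-level vocabulary like this one fails on any word it has not seen before, which is the gap that the subword methods in the next section are designed to close.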

The role of tokenizers in different language models

Overall, tokenization varies in granularity, from sentences to words or subwords, based on the requirements of a given task or a language model. Transformer-based models, for instance, commonly use WordPiece or Byte Pair Encoding (BPE) algorithms.

WordPiece Tokenization: Used by models like BERT, WordPiece starts with a base vocabulary of individual characters and progressively merges pairs of tokens to form new, larger tokens. Rather than simply picking the most frequent pair, it picks the pair that most increases the likelihood of the training data.

This approach is beneficial for handling words not in the initial vocabulary, as it breaks them down into recognizable sub-tokens.

WordPiece is adept at dealing with a mix of common words and rare or technical terms, ensuring more efficient and effective processing by the model.
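At inference time, WordPiece-style tokenizers are commonly described as splitting each word greedily, longest match first, and marking non-initial pieces with a “##” prefix. The sketch below illustrates that idea with a tiny hand-written vocabulary; the vocabulary and function name are assumptions for illustration, not BERT’s actual vocabulary or code.

```python
def wordpiece_tokenize(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Greedy longest-match-first split of a single word, WordPiece style."""
    pieces: list[str] = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # continuation piece
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [unk]  # no piece fits: treat the whole word as unknown
        pieces.append(match)
        start = end
    return pieces


toy_vocab = {"token", "##ization", "##ize", "##s", "time"}
print(wordpiece_tokenize("tokenization", toy_vocab))  # ['token', '##ization']
print(wordpiece_tokenize("times", toy_vocab))         # ['time', '##s']
print(wordpiece_tokenize("xyz", toy_vocab))           # ['[UNK]']
```

Note how “times” reuses the “time” piece, echoing the earlier example of a shared root plus a suffix.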

Byte Pair Encoding (BPE): Employed by OpenAI’s GPT models, including those behind ChatGPT, BPE is a subword tokenizer that iteratively replaces the most frequent pairs of bytes or characters in a text with a single new token.

Starting with the basic characters of the training text, BPE continuously merges the most common pairs, adding them as new units to the vocabulary. This process is repeated until a desired vocabulary size is reached.

The advantage of BPE lies in its ability to handle out-of-vocabulary (OOV) words by breaking them into smaller, known subwords, making it particularly useful for languages with complex morphology or large vocabularies. For example, a BPE encoder would split the word ’encoding’ into ’encod’ and ‘ing’ units. Given that ‘ing’ is a common suffix in English, frequently appearing across various texts, this segmentation aids in enhancing the model’s capacity for generalization and in deepening its comprehension of grammatical structures.
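The merge loop described above can be sketched in a few lines. This is a toy, character-level version trained on four words, written for illustration only; real BPE tokenizers work on bytes, train on large corpora, and add many optimizations. The function name is hypothetical.

```python
from collections import Counter


def learn_bpe_merges(words: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn BPE merge rules from a list of words, starting from characters."""
    # Each word is a tuple of symbols, weighted by how often it occurs.
    corpus = Counter(tuple(word) for word in words)
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        # Count every adjacent symbol pair across the corpus.
        pair_counts: Counter = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break  # nothing left to merge
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        # Rewrite the corpus with the best pair fused into one symbol.
        new_corpus: Counter = Counter()
        for symbols, freq in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_corpus[tuple(merged)] += freq
        corpus = new_corpus
    return merges


merges = learn_bpe_merges(["encoding", "decoding", "coding", "ending"], num_merges=3)
print(merges)
```

On this tiny corpus the first merges are all drawn from the shared ending of the four words, which mirrors how frequent suffixes such as ‘ing’ end up as reusable units in a real vocabulary.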

Other popular methods include SentencePiece, which is useful in multilingual settings, and character-based tokenization, which is widely used in Chinese language models.

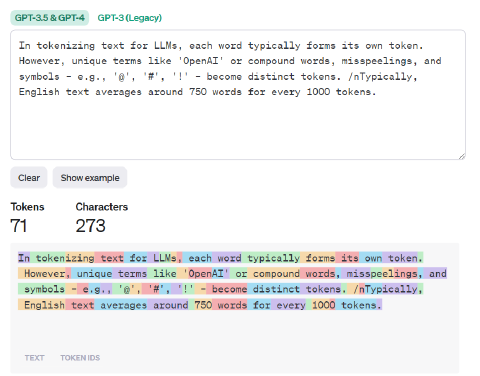

Overview of tools available for counting tokens

Splitting text into tokens becomes indispensable in complex applications like Retrieval Augmented Generation. This process involves querying a corpus of documents to find relevant content and then integrating this context into a prompt. It is the same primitive that underpins our AI document processing solutions, where token budgets directly constrain how much source material an LLM can reason over per request.

The challenge lies in including as much pertinent context as possible without exceeding the token limit. To address this, LLMs come equipped with native functions or methods enabling token counting within the model’s processing pipeline. This integration is invaluable, as it aligns the token count with the model’s specific tokenization algorithm, yielding the most accurate count for that model.
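One simple way to respect the limit is to add retrieved chunks in ranked order until the budget runs out. The sketch below assumes the chunks are already sorted by relevance and uses a whitespace word count as a stand-in counter; both function names are hypothetical, and in practice you would pass in a counter built on the model’s own tokenizer.

```python
from typing import Callable


def approx_token_count(text: str) -> int:
    """Stand-in counter (whitespace words); swap in a real tokenizer's count."""
    return len(text.split())


def pack_context(chunks: list[str], max_tokens: int,
                 count_tokens: Callable[[str], int] = approx_token_count) -> list[str]:
    """Keep chunks, in ranked order, until the token budget would be exceeded."""
    selected: list[str] = []
    used = 0
    for chunk in chunks:
        cost = count_tokens(chunk)
        if used + cost > max_tokens:
            break  # stop at the first chunk that does not fit
        selected.append(chunk)
        used += cost
    return selected


ranked_chunks = [
    "Tokens are fragments of language.",        # 5 words
    "BPE merges frequent symbol pairs.",        # 5 words
    "Context windows cap how much text fits.",  # 7 words
]
print(pack_context(ranked_chunks, max_tokens=12))
# ['Tokens are fragments of language.', 'BPE merges frequent symbol pairs.']
```

Stopping at the first chunk that does not fit keeps the most relevant material; a variant could skip oversized chunks and keep looking for smaller ones further down the ranking.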

For OpenAI, such a library is [tiktoken](https://pypi.org/project/tiktoken/0.1.1/). It’s an open-source BPE tokenizer, which offers support for three specific encodings used by OpenAI models.

cl100k_base encoding: This tokenizer is utilized with gpt-4, gpt-3.5-turbo and text-embedding-ada-002 models, which are widely used in applications like ChatGPT. The cl100k_base encoding is designed to align with the advanced capabilities of these models, particularly in handling complex language structures and diverse text embeddings.

p50k_base (and p50k_edit) encoding: Models such as the Codex series, text-davinci-002, and text-davinci-003 leverage this encoding. The p50k_base encoding is tailored to the specificities of these models, which are often used for more sophisticated text generation and coding-related tasks.

r50k_base (or gpt2) encoding: This is employed by earlier GPT-3 models like davinci. The r50k_base or gpt2 encoding is particularly matched to the needs of these models, balancing between the requirements of complex language understanding and generation.

For open-source LLMs, the SentenceTransformers library can be a valuable resource, providing diverse WordPiece-based tokenization methods for Transformer models. This tool is especially useful for teams requiring flexibility in handling various language processing tasks.

Another robust tool for text tokenization is the AutoTokenizer class from the transformers library. This class significantly simplifies the process of preparing data for use with sophisticated models such as Mixtral 8x7B. You pass the name of a pre-trained model as a string to the from_pretrained() method and assign the result to a variable, for example tokenizer = AutoTokenizer.from_pretrained('mistralai/Mixtral-8x7B-v0.1').

Step-by-step guide to tokenizing text

Now, let’s try to handle tokens in our application with a few lines of code.

Step 1: Installing the Necessary Libraries

Before you start working on simple text tokenization, ensure that you have all dependencies installed on your local machine.

%pip install --upgrade tiktoken

%pip install --upgrade openai

Step 2: Preparing Your Text

You should also have some text you want to work with. This can be a string variable or text read from a file.

text = "OpenAI is a private research laboratory. It aims to develop and direct artificial intelligence (AI) in ways that benefit humanity as a whole."

Step 3: Loading an Encoding

Use the function tiktoken.get_encoding() to load an encoding by its name. Note that the first execution of this function will require an internet connection for downloading purposes.

import tiktoken

encoding = tiktoken.get_encoding('cl100k_base')

Don’t forget to use tiktoken.encoding_for_model() to match your tokenizer with the specific OpenAI model you’re using. Alternatively, consider utilizing the Claude Tokenizer for efficient token counting in applications based on the Anthropic framework.

encoding = tiktoken.encoding_for_model("gpt-3.5-turbo")

Step 4: Turning text into tokens with encoding.encode()

Here’s where the magic happens! Use .encode() to turn your text into a series of numbers – these are your tokens.

tokens = encoding.encode(text)
print("Tokenized text:", tokens)

# Output
# Tokenized text: [5109, 15836, 374, 264, 879, 3495, 27692, 13, 1102, 22262, 311, 2274, 323, 2167, 21075, 11478, 320, 15836, 8, 304, 5627, 430, 8935, 22706, 439, 264, 4459, 13]

To count how many tokens you’ve got, you should just run:

def count_tokens(string: str, encoding_name: str) -> int:
    """Returns the number of tokens in a text string."""
    encoding = tiktoken.get_encoding(encoding_name)
    num_tokens = len(encoding.encode(string))
    return num_tokens

count_tokens(text, "cl100k_base")

# Output
# 28

Step 5: Turn tokens into text with encoding.decode()

With the .decode() method, you can convert a list of token integers back into a coherent string.

encoding = tiktoken.encoding_for_model("gpt-3.5-turbo")

encoding.decode([5109, 15836, 374, 264, 879, 3495, 27692, 13, 1102, 22262, 311, 2274, 323, 2167, 21075, 11478, 320, 15836, 8, 304, 5627, 430, 8935, 22706, 439, 264, 4459, 13])

# Output

# OpenAI is a private research laboratory. It aims to develop and direct artificial intelligence (AI) in ways that benefit humanity as a whole.

But be careful with individual tokens – .decode() might get a bit tricky there: it may result in a lossy conversion for tokens that don’t align with UTF-8 boundaries.

For such cases, .decode_single_token_bytes() is a safe way to convert a single token back into the bytes it represents.

encoding = tiktoken.encoding_for_model("gpt-3.5-turbo")
[encoding.decode_single_token_bytes(token) for token in [5109, 15836, 374, 264, 879, 3495, 27692, 13, 1102, 22262, 311, 2274, 323, 2167, 21075, 11478, 320, 15836, 8, 304, 5627, 430, 8935, 22706, 439, 264, 4459, 13]]

# Output
# [b'Open', b'AI', b' is', b' a', b' private', b' research', b' laboratory', b'.',
#  b' It', b' aims', b' to', b' develop', b' and', b' direct', b' artificial',
#  b' intelligence', b' (', b'AI', b')', b' in', b' ways', b' that', b' benefit',
#  b' humanity', b' as', b' a', b' whole', b'.']
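Why decoding token by token can be lossy is easiest to see without any tokenizer at all. The sketch below uses only built-in Python string methods and a hypothetical split point: it cuts the UTF-8 bytes of a word in the middle of a two-byte character, the way a byte-level token boundary might, and compares decoding the pieces separately with decoding the joined bytes.

```python
text = "naïve"
raw = text.encode("utf-8")   # b'na\xc3\xafve': "ï" takes two bytes

# Hypothetical split that cuts the two-byte character in half.
pieces = [raw[:3], raw[3:]]  # [b'na\xc3', b'\xafve']

# Decoding each piece on its own is lossy: invalid bytes become U+FFFD.
lossy = "".join(piece.decode("utf-8", errors="replace") for piece in pieces)

# Joining the bytes first and decoding once recovers the original text.
lossless = b"".join(pieces).decode("utf-8")

print(lossy == text)     # False
print(lossless == text)  # True
```

This is the reason to work with raw bytes when inspecting single tokens and to decode to text only once the full sequence has been reassembled.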

Step 6: Comparing encodings (Optional)

Curious how different encodings work? Let’s integrate what we’ve learned to draw a line between them and see the differences closely.

def compare_encodings(example_string: str) -> None:
    """Prints a comparison of three string encodings."""
    print(f'\nExample string: "{example_string}"')
    # for each encoding, print the # of tokens, the token integers, and the token bytes
    for encoding_name in ["r50k_base", "p50k_base", "cl100k_base"]:
        encoding = tiktoken.get_encoding(encoding_name)
        token_integers = encoding.encode(example_string)
        num_tokens = len(token_integers)
        token_bytes = [encoding.decode_single_token_bytes(token) for token in token_integers]
        print()
        print(f"{encoding_name} encoding: {num_tokens} tokens")
        print(f"token integers: {token_integers}")
        print(f"token bytes: {token_bytes}")

compare_encodings("antidisestablishmentarianism")

Now, if we call it for a word like “antidisestablishmentarianism”, we get the following results:

Example string: "antidisestablishmentarianism"

r50k_base encoding: 5 tokens

token integers: [415, 29207, 44390, 3699, 1042]

token bytes: [b'ant', b'idis', b'establishment', b'arian', b'ism']

p50k_base encoding: 5 tokens

token integers: [415, 29207, 44390, 3699, 1042]

token bytes: [b'ant', b'idis', b'establishment', b'arian', b'ism']

cl100k_base encoding: 6 tokens

token integers: [519, 85342, 34500, 479, 8997, 2191]

token bytes: [b'ant', b'idis', b'establish', b'ment', b'arian', b'ism']

Step 7: Counting tokens for chat completions API calls (Optional)

Models like GPT-3.5-Turbo and GPT-4, while utilizing tokens similarly to their predecessors in completions, present a unique challenge in token count estimation due to their message-based structure. The next example is designed to solve this challenge for messages passed to gpt-3.5-turbo or gpt-4.

It’s crucial to note that requests incorporating optional functions will likely use additional tokens beyond these calculated estimates.

def num_tokens_from_messages(messages, model="gpt-3.5-turbo-0613"):
    """Return the number of tokens used by a list of messages."""
    try:
        encoding = tiktoken.encoding_for_model(model)
    except KeyError:
        print("Warning: model not found. Using cl100k_base encoding.")
        encoding = tiktoken.get_encoding("cl100k_base")
    # gpt-3.5-turbo (including the default -0613 snapshot) and the gpt-4 family
    if model in {"gpt-4", "gpt-3.5-turbo", "gpt-3.5-turbo-0613", "gpt-4-1106-preview", "gpt-4-32k-0613"}:
        tokens_per_message = 3
        tokens_per_name = 1
    elif model == "gpt-3.5-turbo-0301":
        tokens_per_message = 4  # every message follows <|start|>{role/name}\n{content}<|end|>\n
        tokens_per_name = -1  # if there's a name, the role is omitted
    else:
        raise NotImplementedError(
            f"""num_tokens_from_messages() is not implemented for model {model}.""")
    num_tokens = 0
    for message in messages:
        num_tokens += tokens_per_message
        for key, value in message.items():
            num_tokens += len(encoding.encode(value))
            if key == "name":
                num_tokens += tokens_per_name
    num_tokens += 3  # every reply is primed with <|start|>assistant<|message|>
    return num_tokens

Let’s now verify if the function above aligns with the responses from the OpenAI API.

import openai
import tiktoken

openai.api_key = "xxxxxxx"

example_messages = [
    {
        "role": "system",
        "content": "You are a skilled assistant adept at translating complex corporate language into straightforward English.",
    },
    {
        "role": "system",
        "name": "example_user",
        "content": "Integrating new synergies will significantly enhance our revenue growth.",
    },
    {
        "role": "system",
        "name": "example_assistant",
        "content": "Combining different elements effectively will lead to an increase in our earnings.",
    },
    {
        "role": "system",
        "name": "example_user",
        "content": "We should reconvene when we have additional capacity to explore further avenues for maximizing our impact.",
    },
    {
        "role": "system",
        "name": "example_assistant",
        "content": "Let's discuss this again when we have more resources to consider ways to improve our outcomes.",
    },
    {
        "role": "user",
        "content": "Due to this unexpected change in direction, we lack the time to extensively overhaul the project for the client.",
    }]

for model in ["gpt-3.5-turbo-0301", "gpt-3.5-turbo", "gpt-4-1106-preview", "gpt-4"]:
    print(model)
    # example token count from the function defined above
    print(f"{num_tokens_from_messages(example_messages, model)} prompt tokens counted by num_tokens_from_messages().")
    # example token count from the OpenAI API (openai>=1.0 interface)
    response = openai.chat.completions.create(
        model=model, messages=example_messages,
        temperature=0, max_tokens=1,  # we're only counting input tokens here
    )
    print(f"{response.usage.prompt_tokens} prompt tokens counted by the OpenAI API.")
    print()

# Output

'''

gpt-3.5-turbo-0301

139 prompt tokens counted by num_tokens_from_messages().

139 prompt tokens counted by the OpenAI API.

gpt-3.5-turbo

141 prompt tokens counted by num_tokens_from_messages().

141 prompt tokens counted by the OpenAI API.

gpt-4-1106-preview

141 prompt tokens counted by num_tokens_from_messages().

141 prompt tokens counted by the OpenAI API.

gpt-4

141 prompt tokens counted by num_tokens_from_messages().

141 prompt tokens counted by the OpenAI API.

'''

Conclusion

As we wrap up this guide on token counts, it’s clear that understanding tokens is truly important for anyone working with these large language models. Tokens might seem a bit technical at first, but they’re actually key to how these AI systems understand and create language. In the next part of this series, we go deeper into this topic and see how to use LLMs with a larger context window in your application.

If you are building production applications that need to manage token budgets effectively, or are looking for expert guidance on optimizing your LLM architecture, explore our LLM consulting and development services. For broader strategy work, including model selection, agent design, and cost optimisation across your AI stack, see our AI consulting and development services and AI agent development offerings.

Frequently asked questions

A token is a fragment of a word that is represented by a unique integer. Tokens can be words, parts of words, or punctuation marks.

The tokenization method can significantly impact performance, so developers will try a wide range of tactics to eke out that last percent. But this largely depends on the source text. If an LLM is trained mostly on web-scraped data, then the tokenizers might be optimized for simpler language.

Typically yes, since there is redundancy in language. But this is unrelated to the act of tokenization. See this article.

Punctuation is tokenized, since it affects the meaning of the sentence.

WordPiece often results in smaller token counts, but sometimes struggles with out-of-dictionary words.

This depends on the underlying tokenizer. If the tokenizer is working on a byte-level, then it is more likely to continue working with out-of-dictionary words.

Yes. In German, for example, words are often excessively combined. Numbers are a good example. In German, neunzehnhundertvierundachtzig takes about 11 tokens, compared to just five for nineteen eighty four.