Buildpacks - The Ultimate Machine Learning Container

by Enrico Rotundo, Associate Data Scientist

Winder.AI worked with Grid.AI (now Lightning AI) to investigate how Buildpacks can minimize the number of base containers required to run a modern platform. A summary of this work includes:

- Researching Buildpack best practices and adapting to modern machine learning workloads

- Reducing user burden and maintenance costs by developing Buildpacks ready for production use

- Reporting and training on how Buildpacks can be leveraged in the future

The video below presents this work.

The Problem: How to Manage The Explosion of Base Containers

All machine learning (ML) platforms have one goal in common: relieving users from unnecessary burdens when doing their work. In practical terms that means letting users focus on their ML code, while the platform takes care of the repeatable tasks. Users submit their work to a remote platform by packaging training code, dependency files, configurations and so on.

Packaging project requirements like custom libraries, system level bundles, hardware and security specifications is complex. The net result is ML practitioners struggle with the complexity, which reduces efficiency.

The most traditional way to solve this problem is to bundle ML code and all dependencies into a tarball file. This ad-hoc process is error-prone and hard to maintain, because it is hard to define a clear interface.

Another approach is to ask the user for a Dockerfile or container. The problem with this is, while it makes the process reproducible, writing a proper Dockerfile requires specific Linux and Docker knowledge that spans way beyond the scope of ML. Therefore MLOps platform teams try to share containers, like when a platform team provides approved machine learning containers that others can build off.

However, cloud ML environments have different specifications (e.g. CPU, GPU, TPU, etc.). Security teams mandate regular patching and updates. High-level libraries (e.g. PIL) may require OS-level libraries that you don’t want to include in other distributions. Conda vs. Pip. Private repositories. Training vs. inference. And you might even have different “stacks”, like the Jupyter series of containers. The list is ever-growing, and in a large organization this can lead to tens or even hundreds of individual ML Dockerfiles.

This multiplicity forces you to write dozens of ad-hoc Dockerfiles, and before long it becomes hard to maintain any level of reliability. One notable recent example is the introduction of Apple M1 hardware, which took time to fix through the ML stack. If you were working on an MLOps platform team at this time you would have had to create a whole range of new Dockerfiles just for M1 architectures, which made it highly likely that they diverged from the Dockerfiles destined for the cloud.

What are Buildpacks?

Buildpacks are a packaging engine that transforms your source code into an image with zero or minimal user input. The output is a standard Open Container Initiative (OCI) image, which means it can run on any cloud. Simply put: code in, Docker image out. That easy. Promised!

Before we look at what makes all this possible, allow me to clarify something first. The term Buildpacks refers to the Cloud Native Buildpacks project (https://Buildpacks.io/), whilst Buildpack(s) refers to a software component responsible for building an application. More on the latter in just a moment.

A Buildpack is nothing else than a set of scripts supplied with metadata. But before I describe how Buildpacks are employed in a build, I would like to spend a few words discussing the environment.

Build and Run Images

A build involves two containerized environments corresponding to two different (but related) images that work together. The build-image is where build scripts (i.e. Buildpacks) are effectively run.

This image needs all package managers (e.g. apt-get, pip, conda) pre-installed, as well as proper access to any private package or data repositories if need be.

The run-image provides the environment in which applications are launched at run-time. All build artifacts (e.g. compiled app) are auto-magically carried over from the build-image to the run-image by Buildpacks. This is similar to Docker’s multi-stage builds, except it works with all container formats. The benefit is that the runtime environment can be made much smaller and more secure.

Stack

The pair of run and build images is called a stack. A stack is a relatively low-level entity in the sense that in common use cases the end user would not be concerned with it. However, it is good to know that this is where the base image is set. Want to use Ubuntu Bionic or Alpine Linux as a base? This is where you need to look.

Below is an example of a stack from the official docs. It is simply a multi-stage Dockerfile, nothing more complicated than a docker build and voila! Note how the build-image extends the common base it shares with the run-image by installing git, wget and jq via apt-get.

# 1. Set a common base
FROM ubuntu:bionic as base

# 2. Set required CNB information (the label key must be lowercase)
ENV CNB_USER_ID=1000
ENV CNB_GROUP_ID=1000
ENV CNB_STACK_ID="io.Buildpacks.samples.stacks.bionic"
LABEL io.buildpacks.stack.id="io.Buildpacks.samples.stacks.bionic"

# 3. Create the user
RUN groupadd cnb --gid ${CNB_GROUP_ID} && \
    useradd --uid ${CNB_USER_ID} --gid ${CNB_GROUP_ID} -m -s /bin/bash cnb

# 4. Install common packages
RUN apt-get update && \
    apt-get install -y xz-utils ca-certificates && \
    rm -rf /var/lib/apt/lists/*

# 5. Start a new run stage
FROM base as run

# 6. Set user and group (as declared in base image)
USER ${CNB_USER_ID}:${CNB_GROUP_ID}

# 7. Start a new build stage
FROM base as build

# 8. Install packages that we want to make available at build time
RUN apt-get update && \
    apt-get install -y git wget jq && \
    rm -rf /var/lib/apt/lists/* && \
    wget https://github.com/sclevine/yj/releases/download/v5.0.0/yj-linux -O /usr/local/bin/yj && \
    chmod +x /usr/local/bin/yj

# 9. Set user and group (as declared in base image)
USER ${CNB_USER_ID}:${CNB_GROUP_ID}

Builder

After the creation of a custom stack, there is one more component I need to introduce before I dive into Buildpacks’ specifics. This is the component where a stack and a set of Buildpacks come together to form a Builder, which “allows you to control what Buildpacks are used and what image apps are based on.”

A builder is defined in a builder.toml file; see the example below. First, it points to a stack ID and the related image names using the [repository]:[tag] format. Second, it references an arbitrary number of Buildpacks to include in the builder. Third, it specifies a couple of Buildpack groups.

Here’s where things get interesting. “A Buildpack group is a list of Buildpack entries defined in the order in which they will run.” Yes, a builder can contain multiple groups, and the lifecycle (more on the lifecycle later) selects the first group, in order, in which all Buildpacks pass the detection checks. With this mechanism you can reuse individual Buildpacks and compose builders that address a variety of scenarios!

# Stack that will be used by the builder
[stack]
id = "io.Buildpacks.samples.stacks.bionic"
build-image = "cnbs/sample-stack-build:bionic"
run-image = "cnbs/sample-stack-run:bionic"

# Buildpacks to include in builder (TOML table names are case-sensitive)
[[buildpacks]]
uri = "samples/Buildpacks/pip"

[[buildpacks]]
uri = "samples/Buildpacks/conda"

[[buildpacks]]
uri = "samples/Buildpacks/Poetry"

# Order used for detection
[[order]]
    [[order.group]]
    id = "samples/pip"
    version = "0.0.1"

    [[order.group]]
    id = "samples/conda"
    version = "0.0.1"

# Alternative order, if first one does not pass
[[order]]
    [[order.group]]
    id = "samples/pip"
    version = "0.0.1"

    [[order.group]]
    id = "samples/Poetry"
    version = "0.0.1"
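The group selection rule described above can be sketched in a few lines of Python. This is only an illustration of the "first group where every Buildpack passes" idea, not the lifecycle's actual implementation: the function name `select_group` and the `passes` callback are hypothetical, and the sketch ignores details such as optional Buildpacks.

```python
from typing import Callable, Optional

# A group is an ordered list of Buildpack IDs, as in the [[order.group]] entries.
Group = list[str]


def select_group(order: list[Group], passes: Callable[[str], bool]) -> Optional[Group]:
    """Return the first group in which every Buildpack passes detection.

    `passes` is a hypothetical stand-in for running a Buildpack's detect script.
    Returns None when no group qualifies, which corresponds to a failed detection.
    """
    for group in order:
        if all(passes(buildpack_id) for buildpack_id in group):
            return group
    return None


# The two groups from the builder.toml example.
ORDER = [
    ["samples/pip", "samples/conda"],
    ["samples/pip", "samples/Poetry"],
]
```

For an app where only the Pip and Poetry Buildpacks pass detection, `select_group(ORDER, {"samples/pip", "samples/Poetry"}.__contains__)` skips the first group and returns the second.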

So far I have explained how the base images are defined, and how these are combined with Buildpacks to create meaningful build sequences. In a way all that is foreword.

In fact, in the vast majority of use cases you do not need to create your own builder because there are off-the-shelf ones ready for use. For instance, Paketo maintains a well-documented Python builder shipping with Buildpacks covering major package managers such as Pip, Pipenv, Miniconda and Poetry.

But imagine including business-specific Buildpacks in your builder, like a security Buildpack, or a unified tagging Buildpack. In that case you’d only need to maintain a single Buildpack and just reference it from other builders. It’s a bit like having company-specific Helm charts.

Buildpack

Finally, it is time to talk about that fundamental component named Buildpack. Compared to a stack or a builder, you are more likely to dig into a Buildpack’s source code to learn or alter its functionality.

What is it then? “A Buildpack is a set of executables that inspects your app source code and creates a plan to build and run your application.” This is achieved via two scripts and a metadata file.

While the latter acts as a manifest file, the two scripts implement the following: 1) a detection check (i.e. bin/detect) that decides whether the Buildpack applies to the app; for instance, a Pip Buildpack might look for a requirements.txt, while a Conda Buildpack would look for an environment.yml; 2) a build phase (i.e. bin/build) that actually builds the application, sets environment variables or adds app dependencies. For example, a Pip Buildpack might do so by running pip install -r requirements.txt.
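To make the detection check more concrete, here is a minimal sketch of the logic a bin/detect executable could contain, written in Python. It assumes the convention, as I understand the Buildpack specification, that exit code 0 means "this Buildpack applies" and exit code 100 means "it does not"; the function name `detect` and its parameters are hypothetical.

```python
from pathlib import Path

# Assumed detect exit codes: 0 = Buildpack applies, 100 = Buildpack does not apply.
DETECT_PASS = 0
DETECT_FAIL = 100


def detect(app_dir: str, marker_file: str) -> int:
    """Return the exit code a detect script would use for this app directory.

    A Pip Buildpack would pass marker_file="requirements.txt",
    a Conda Buildpack marker_file="environment.yml".
    """
    if (Path(app_dir) / marker_file).is_file():
        return DETECT_PASS
    return DETECT_FAIL
```

A real bin/detect would end with something like `sys.exit(detect(".", "requirements.txt"))`, assuming the script is run from the app's source directory.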

In fact, a Buildpack can do virtually anything that does not require root permissions. It can use a configuration file to set up the image’s entry point. It could run a pre-build script with custom code, and so on.

For example, you could create a Buildpack that checks for a business-tags.yaml file and sets the labels on the container. Or one that reads a hardware.txt file and installs AWS CUDA libraries if the file says “aws” or Coral TPU dependencies if the file says “coral”. No more multiple Dockerfiles!
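As a rough illustration of the tagging idea, the sketch below turns the contents of a business-tags.yaml file into label entries. It rests on two assumptions: that the file only holds flat "key: value" lines (so no YAML library is needed), and that a Buildpack declares image labels as `[[labels]]` entries in its launch.toml file, which is my reading of the Buildpack specification. The function names are hypothetical.

```python
import json


def parse_tags(text: str) -> dict[str, str]:
    """Parse flat 'key: value' lines, skipping blank lines and # comments."""
    tags: dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or ":" not in line:
            continue
        key, value = line.split(":", 1)
        tags[key.strip()] = value.strip()
    return tags


def render_labels(tags: dict[str, str]) -> str:
    """Render tags as TOML [[labels]] entries (assumed launch.toml format)."""
    # json.dumps yields a double-quoted, escaped string that is also a valid
    # TOML basic string for ordinary text.
    entries = [
        f"[[labels]]\nkey = {json.dumps(key)}\nvalue = {json.dumps(value)}\n"
        for key, value in tags.items()
    ]
    return "\n".join(entries)
```

The build script would then write the rendered text into launch.toml inside the layers directory it is given, so every image built with that builder carries the same set of labels.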

Lifecycle

A lifecycle is an orchestrated execution of Buildpacks to compose the final app image. A very useful tool to launch a lifecycle on your host is the pack client; at the end of this section I list more DevOps oriented tools capable of integrating with continuous integration systems and Kubernetes. As introduced previously, a lifecycle is in charge of putting pieces together. This takes place when we launch pack build sample-app --path samples/apps/java-maven --builder cnbs/sample-builder:bionic. Let’s look at each stage in sequential order.

- Analyze

Validates registry access for each image used in the build stage. This stops the build early in the process if registry access is denied. Without getting lost in lengthy technicalities, I would just mention that in previous versions this phase was in charge of cache validation, but recently this responsibility has been moved downstream.

- Detect

This is a very important stage. Each Buildpack comes with a detection script that simply checks if that Buildpack is suitable for the app in question. For example, a Pip Buildpack may look for a requirements.txt file. If the file is present, the script simply exits with code 0 so that Buildpacks will add it to the set of valid candidates.

Depending on the set of Buildpacks that passed the detection, Buildpacks works out the group of Buildpacks that satisfy the order group specified in the builder.toml configuration. For clarity, I report below the example I am referring to. Buildpacks “looks for the first group that passes the detection process. If all of the groups fail, the detection process fails.”

# Order used for detection
[[order]]
    [[order.group]]
    id = "samples/pip"
    version = "0.0.1"

    [[order.group]]
    id = "samples/conda"
    version = "0.0.1"

# Alternative order, if first one does not pass
[[order]]
    [[order.group]]
    id = "samples/pip"
    version = "0.0.1"

    [[order.group]]
    id = "samples/Poetry"
    version = "0.0.1"

- Restore

This stage restores layers from the cache to optimize the building process. Layers are created in the build phase; keep reading to learn more about this.

- Build

As stated previously, each Buildpack comes with a build script containing the logic that turns source code into runnable artifacts. By the time the lifecycle reaches this stage, a group of Buildpacks has passed detection, so the lifecycle launches their respective build scripts in order.

Every build script writes out to a dedicated file system location that corresponds to a Docker layer. That means the result of a build process is a set of independent layers that can be cached and reused.

- Export

The very last phase assembles the final OCI image (see figure below) by moving the layers created by the Buildpacks in the previous stage (BP-provided layers) from the build-image to the run-image. At this stage the source code (app) is also moved to the final image and a proper entrypoint is set up.
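The idea that each build script writes to its own layer location, which can then be cached and exported, can be sketched as follows. This is a simplified illustration under stated assumptions: the layer name is hypothetical, and the `[types]` table with `launch`, `build` and `cache` flags follows my reading of recent Buildpack API versions (older versions laid these keys out differently).

```python
from pathlib import Path


def write_layer(layers_dir: str, name: str, *, launch: bool, cache: bool) -> Path:
    """Create <layers_dir>/<name>/ and a sibling <name>.toml describing the layer.

    launch=True asks for the layer to be exported into the final app image;
    cache=True asks for it to be restored on the next build.
    """
    layer = Path(layers_dir) / name
    layer.mkdir(parents=True, exist_ok=True)
    # Assumed metadata layout; check the Buildpack API version you target.
    metadata = (
        "[types]\n"
        f"launch = {str(launch).lower()}\n"
        "build = false\n"
        f"cache = {str(cache).lower()}\n"
    )
    (Path(layers_dir) / f"{name}.toml").write_text(metadata)
    return layer
```

A Pip Buildpack could, for instance, call `write_layer(layers_dir, "pip-deps", launch=True, cache=True)` and install packages into the returned directory, so that an unchanged requirements.txt lets the next build reuse the layer instead of reinstalling everything.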

Integrations

To interact with Buildpacks I have mentioned the pack tool above, which works perfectly on a single host. However, in real-life deployments you want to use a more sophisticated toolkit capable of automating build pipelines as well as offloading that burden to remote resources. Luckily, the Buildpacks project comes with a number of open-source integrations.

Orb is an integration for CircleCI that allows users to run pack commands inside their pipelines. Furthermore, GitLab Auto DevOps ships with support for Buildpacks. Jenkins can also run Buildpacks via a step called cnbBuild that allows you to execute a build in a Jenkins pipeline.

What about Kubernetes? kpack comes in handy with a declarative builder resource. It can trigger automatic rebuilds upon app source changes, or Buildpack version updates. Very useful!

Last but not least, there is also a helpful task for Tekton to build and optionally publish a runnable image.

Benchmark with Docker

I should mention that Buildpack build times are not blazing fast compared to pure Docker, because of the extra detection overhead. Let’s keep in mind that while you must invest time and effort into manually crafting a well-optimized Dockerfile, Buildpacks are fully automated and add tons of flexibility. They know how to install virtually any dependency and output a tiny app image with no user input!

To investigate this further, I have compared the build times of Docker and Buildpacks over a sample Python project. This is not an exhaustive comparison because it does not take into account the flexibility gap between the two systems. The objective is simply gauging the difference in build performance and measuring resource usage.

In terms of dependencies, the benchmarking application requires OpenCV (a popular computer vision library) via apt-get and its Python wrapper via Pip. I also install Pandas (a data analysis package) via Pip.

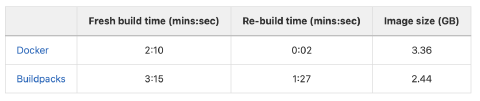

I use a virtual machine with 4 vCPUs and 16GB of memory and run a timed build from scratch as well as a re-build. The table below reports the resulting build times.

Buildpacks are slower to generate a fresh build, because running a complete Lifecycle (see above) is a much more complex process than just building a Dockerfile.

Buildpacks’ re-build times are significantly slower. This is because while Docker can directly fetch the prior build, Buildpacks still need to run all the build steps (analyze, detect, restore, build, export). Each step uses caching but that still results in some extra running time.

It is easy to minimize the size of containers built by Buildpacks because the “work” is distinct. An optimization in one Buildpack improves the size of all resulting containers. You could obtain similar image sizes with multi-stage Docker builds but you would have to manually optimize that for every Dockerfile you are responsible for.

Finally, remember that this is for building the containers. The resulting containers execute in exactly the same way, so there is no difference between traditional or Buildpack-built containers.
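For readers who want to repeat a comparison like this, the timing itself can be sketched in Python. This is not the harness used for the numbers above, just a minimal way to time a command; the helper names are hypothetical, and the pack invocation simply reuses the `pack build` flags shown earlier in the article.

```python
import subprocess
import time


def time_command(argv: list[str]) -> float:
    """Run a command to completion and return the elapsed wall-clock seconds."""
    start = time.perf_counter()
    subprocess.run(
        argv,
        check=True,  # raise if the build fails, so failures are not timed as successes
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return time.perf_counter() - start


def pack_build_command(image: str, path: str, builder: str) -> list[str]:
    """Assemble a `pack build` invocation with the flags used in this article."""
    return ["pack", "build", image, "--path", path, "--builder", builder]
```

Calling `time_command(pack_build_command(...))` twice in a row gives a fresh-build time followed by a re-build time, assuming the first call starts from an empty cache. The same helper can time a `docker build` command list for the Docker side of the comparison.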

Build resource usage

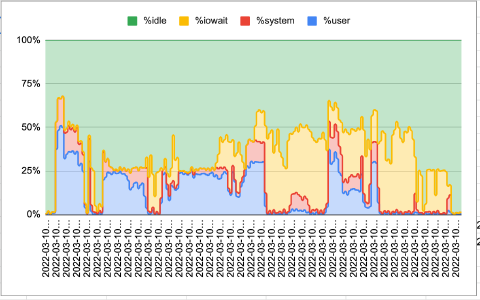

Let’s take a quick look at the resource consumption for a full build process. The build setup is the same as the one used in the section above. The plot below depicts the CPU usage throughout the build. What it highlights is that the task is rather IO bound, at least for the second half. What Buildpacks does during that time is mainly combining the image layers created during the “build” phase and exporting them into the final run-image.

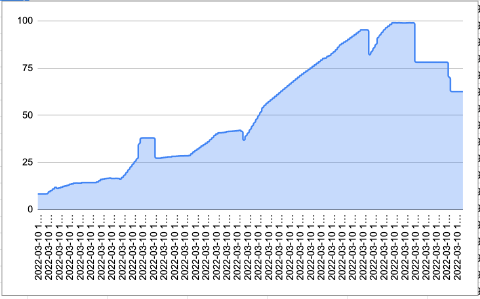

This process is also quite memory intensive. The plot below shows the percentage of memory usage during the build process. All of the 16GB of RAM are being employed at some point during the build.

Breaking down the resource use of Buildpacks is a whole topic on its own and we may write more about that in the future. What is relevant for the scope of this article is that while you may want to employ a machine with a generous amount of memory to speed up your builds, using a large multi-core host will not make a great difference.

Summary

Buildpacks are a very interesting proposition for teams that are responsible for curating base containers for their company. Traditional containers are often maintained independently, which can balloon into a maintenance nightmare.

Buildpacks simplify this process by adding an extra layer of logic to define how a build executes, depending on the context. Platform teams can take advantage of this by exposing build logic through a simple file-based API that is compatible with nearly all modern tooling.

The result, for MLOps platform teams in particular, is more flexibility and lower maintenance costs.

Contact

Winder.AI performed this project to help Grid.AI (now Lightning AI) to improve their service and minimize their maintenance costs. However, our engineers at Winder.AI are ready and waiting to help you improve your service by integrating with your MLOps platform team.

If this work is of interest to your organization, then we’d love to talk to you. Please get in touch with the sales team at Winder.AI and we can chat about how we can help you.