Big Data in LLMs with Retrieval-Augmented Generation (RAG)

by Natalia Kuzminykh, Associate Data Science Content Editor

The motivation behind Retrieval-Augmented Generation (RAG) lies in a fundamental challenge faced by LLMs: their limited access to relevant data. Language models are trained on large datasets, but those datasets stop at a training cutoff and don't cover details such as the latest events or up-to-date personal information, which can be crucial for answering specific queries.

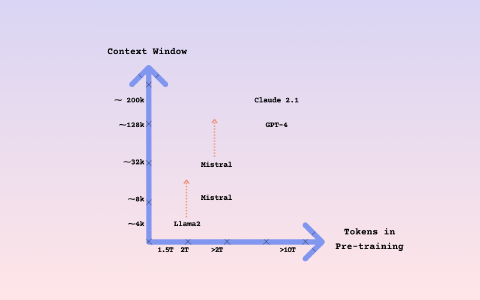

Larger context windows, which we have covered extensively in this series, allow LLMs to process far more tokens at once and work with more complex texts. LLMs have evolved from comprehending only a few pages to understanding entire books.

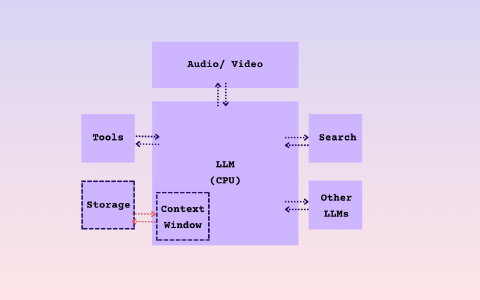

As a result, this capacity places LLMs at the heart of a new computational paradigm, much like the core of an operating system, where accessing external data is a crucial feature. Retrieval-Augmented Generation provides that access in three main steps:

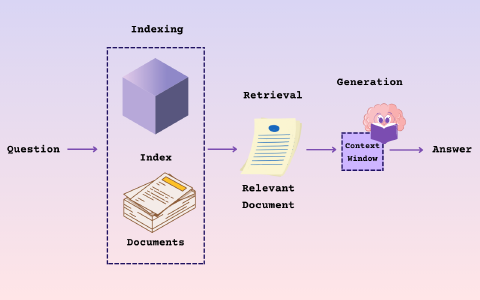

- Indexing: Organizing information from external sources so it can be quickly accessed

- Retrieval: Finding the specific pieces of information needed for a given query

- Generation: Combining the retrieved information with the model's own knowledge to generate accurate and relevant responses

How RAG Works

Indexing

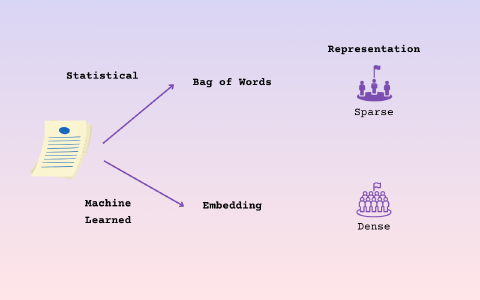

This first stage involves feeding external documents into a retrieval system to prepare them for user queries. To measure how relevant documents are to each other and to a query, the engine relies on a numerical representation of the content: vectors.

Why vectors? Imagine you’re in a library filled with books, articles and documents. Your task is to find information that’s similar or related, but all you can do is read through each page. Sounds daunting. That’s exactly the challenge with analyzing text in its raw form — it’s complicated and time-consuming.

What if, instead of words, each book could be summarized by a unique set of numbers? Comparing numbers is much simpler and faster than comparing sentences and paragraphs. That is why we use numerical representations: they turn complex text comparison into fast arithmetic.

One family of techniques analyzes a document by counting how often each word appears and storing the result as a 'sparse vector' over a large vocabulary of possible words. Each position in the vector corresponds to one vocabulary word, and its value is that word's frequency in the document. The vector is 'sparse' because any single document uses only a small fraction of the vocabulary, so most entries are zero. The full vector is therefore much larger than the document itself, but only the non-zero entries need to be stored, and there are effective search methods built on this type of data, such as inverted indexes and ranking functions like BM25.
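To make the sparse representation concrete, here is a minimal sketch in plain Python. It assumes simple whitespace tokenization (real systems also strip punctuation and usually weight terms, for example with TF-IDF or BM25), uses three invented one-line documents, and builds an inverted index, one of the structures that makes sparse vectors cheap to search.

```python
from collections import Counter, defaultdict


def sparse_vector(text: str) -> dict[str, int]:
    """Bag-of-words term frequencies; only the non-zero entries are stored."""
    return dict(Counter(text.lower().split()))


def build_inverted_index(docs: dict[str, str]) -> dict[str, set[str]]:
    """Map each term to the IDs of the documents that contain it."""
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in sparse_vector(text):
            index[term].add(doc_id)
    return dict(index)


# Invented example documents
docs = {
    "d1": "attention maps a query and key value pairs to an output",
    "d2": "recurrent networks process tokens one step at a time",
    "d3": "multi head attention attends to several subspaces",
}

# The full (dense) form of d1's vector has one slot per vocabulary word, mostly zeros
vocabulary = sorted({t for text in docs.values() for t in text.lower().split()})
v1 = sparse_vector(docs["d1"])
dense_v1 = [v1.get(term, 0) for term in vocabulary]
print(len(vocabulary), dense_v1.count(0))  # 24 13

# Looking up a term touches only the documents that contain it
index = build_inverted_index(docs)
print(sorted(index["attention"]))  # ['d1', 'd3']
```

Even in this tiny corpus, more than half of d1's dense vector is zeros; with a realistic vocabulary of tens of thousands of words, the sparse dictionary form saves far more.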

Recent advances in embeddings have greatly improved the precision of RAG. Modern embedding models produce dense vectors that capture the meaning of a passage rather than just its word counts, and they can process inputs ranging from 512 to over 8,000 tokens, depending on the model. Because these input limits are finite, documents are split into chunks and each chunk is converted into a vector that captures its main meaning; these vectors are then indexed. Questions can be embedded into the same vector space and compared numerically, for example with cosine similarity, to find the chunks most relevant to the query.
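The chunking step can be sketched as follows. This is a minimal illustration, not any library's splitter: it counts words as a stand-in for tokens (real pipelines count tokens with the embedding model's tokenizer), and the chunk_size and overlap values are arbitrary. The overlap means text near a chunk boundary appears in both neighboring chunks.

```python
def chunk_words(text: str, chunk_size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into windows of `chunk_size` words that overlap by `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


# A 20-"word" text split into overlapping 8-word chunks
text = " ".join(f"w{i}" for i in range(20))
chunks = chunk_words(text, chunk_size=8, overlap=2)
for chunk in chunks:
    print(chunk)
# w0 w1 w2 w3 w4 w5 w6 w7
# w6 w7 w8 w9 w10 w11 w12 w13
# w12 w13 w14 w15 w16 w17 w18 w19
```

Each of these chunks would then be passed to the embedding model separately, producing one vector per chunk.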

Retrieval

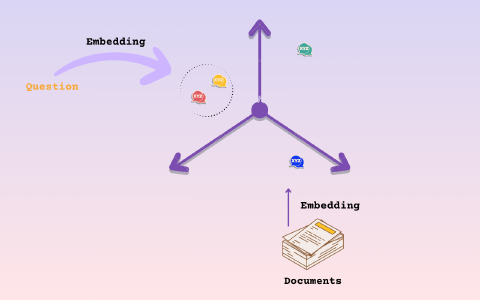

With the documents indexed, the next step is to fetch the ones that match a user's query. It helps to visualize this stage as a three-dimensional (3D) space in which each document is a point whose location is determined by its content, or semantic meaning. Real embedding spaces have hundreds or thousands of dimensions, but the intuition is the same.

Documents with similar content will be located close to each other in this space. This core idea forms the foundation for many search and retrieval methods we see in today’s vector stores.

Specifically, we embed our documents into this space, then embed the query into the same space using the same embedding model. After that, we simply search around the query for nearby documents and retrieve those that are closest.
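Here is a minimal sketch of "search around the query for nearby documents": exact top-k search by cosine similarity over a few toy 3D vectors. The vectors and section names are invented for illustration; in a real system they come from an embedding model, and large collections typically use approximate nearest-neighbor indexes instead of scoring every document.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def top_k(query_vec: list[float], doc_vecs: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the IDs of the k documents whose vectors are closest to the query."""
    ranked = sorted(doc_vecs, key=lambda d: cosine(query_vec, doc_vecs[d]), reverse=True)
    return ranked[:k]


# Toy 3D "embeddings", invented for illustration
doc_vecs = {
    "attention_section": [0.9, 0.1, 0.0],
    "training_section": [0.1, 0.9, 0.1],
    "results_section": [0.2, 0.3, 0.9],
}
query = [0.8, 0.2, 0.1]
print(top_k(query, doc_vecs, k=2))  # ['attention_section', 'results_section']
```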

Think of this space as a place where we can ask, "What documents are near me?", and then retrieve those nearby documents because they are likely to relate to our query.

In practice, we choose how many of the nearest documents (often called k) to retrieve for each query. Many methods implement this search efficiently, from exact search over every vector to approximate nearest-neighbor indexes. Embedding models, document loaders, splitters and other tools can be combined in various ways to test and compare different indexing and retrieval techniques.

Generation

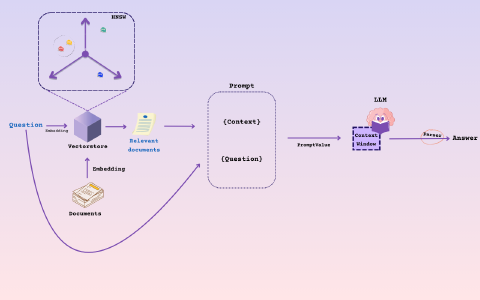

Finally, once we’ve retrieved relevant splits for our query, we pack them into the context window to generate the answer. This is where we introduce the concept of a ‘prompt’.

The prompt is essentially a container that we will fill with a system prompt, the retrieved documents and the user’s question. You can see from the image above how we can create a dictionary from our question and the retrieved documents, then use that dictionary to populate the prompt template with values.

Once populated, the prompt is passed to an LLM, which produces a prediction that we then parse into a string to obtain our answer.
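The generation step can be sketched with a plain Python string template: a dictionary built from the question and the retrieved documents fills the template, the result goes to the model, and an output parser turns the model's reply into a string. The fake_llm function is a hypothetical stand-in for a real LLM call, and the template wording is only an example.

```python
PROMPT_TEMPLATE = (
    "You are a helpful assistant. Answer using only the context below.\n\n"
    "Context:\n{context}\n\n"
    "Question: {question}\n"
    "Answer:"
)


def build_prompt(question: str, retrieved_docs: list[str]) -> str:
    """Fill the template from a dictionary of the question and retrieved documents."""
    values = {"context": "\n---\n".join(retrieved_docs), "question": question}
    return PROMPT_TEMPLATE.format(**values)


def fake_llm(prompt: str) -> dict:
    """Hypothetical stand-in for an LLM call; returns a chat-style message."""
    return {"role": "assistant", "content": "  Attention maps a query and key-value pairs to an output.  "}


def parse_output(message: dict) -> str:
    """Output parser: extract the reply text as a clean string."""
    return message["content"].strip()


retrieved = [
    "Attention maps a query and a set of key-value pairs to an output.",
    "Multi-head attention runs several attention functions in parallel.",
]
prompt = build_prompt("What is attention?", retrieved)
answer = parse_output(fake_llm(prompt))
print(answer)
```

Because str.format only parses placeholders in the template itself, braces that happen to appear in retrieved text are inserted literally rather than treated as placeholders.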

Why RAG is important

The introduction of models like Gemini 1.5 Pro, with its 1M-token context window, certainly raises questions about the relevance of the RAG approach today. Many small datasets can fit within Gemini's context window, and the cost and speed of processing tokens are expected to improve over time. However, there are still a few challenges that RAG can address more effectively:

Domain-specific customization: Many companies have unique and confidential data that is crucial for their operations. RAG allows language models to incorporate this data into applications, then generate responses based on the company’s exclusive information.

Businesses often focus on particular fields that have their own unique jargon and complexities. RAG’s flexibility allows for customization, making it possible to tailor applications that meet the specific needs and language requirements of any industry. Our AI consulting team regularly helps organizations design RAG architectures for domain-specific use cases.

Real-time updates: Markets are dynamic, and keeping up with the latest information is crucial. RAG enables LLMs to access the most recent data from various sources, like news outlets or databases, ensuring that generated content is up to date.

Transparency and source citing: Citing sources and maintaining transparency is crucial, especially in sectors where precision matters, like legal or financial fields. Applications based on RAG can reference the data sources they use, increasing the credibility and accountability of the company.

Handling large document sets: Returning to Gemini's context window, even 10 million tokens (the size Google reported testing with Gemini 1.5 in research) may seem like a huge number, but at roughly four characters per token it is equivalent to only about 40 megabytes of text, which is not enough for large document corpora. It could be enough for some 'small' use cases, but many proprietary corpora are terabytes in size. Building LLM-powered systems over such data therefore still requires retrieving the relevant parts in order to augment language models with contextual information.

Embedding model limitations: The current embedding models, essential for data retrieval, have limitations in handling long text segments. This restricts the amount of context that can be considered in one go, which impacts the efficiency of information retrieval.

Cost and computational requirements: RAG offers a cost-effective solution by augmenting existing LLMs with external data, rather than training new models from scratch. However, at scale, steps such as retrieval over large corpora and context-window management can still be resource-intensive and expensive.
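The 40-megabyte figure for a 10-million-token context comes from back-of-the-envelope arithmetic like the following. It assumes about four characters per token and one byte per character (plain English text); the 1 TB corpus is a hypothetical example, not a measured dataset.

```python
CHARS_PER_TOKEN = 4          # rough rule of thumb for English text (assumption)
context_tokens = 10_000_000  # a 10M-token context window

# ~1 byte per ASCII character, so bytes ~= characters
context_bytes = context_tokens * CHARS_PER_TOKEN
print(context_bytes / 1e6, "MB")  # 40.0 MB

corpus_bytes = 1e12  # a hypothetical 1 TB proprietary corpus
windows_needed = corpus_bytes / context_bytes
print(windows_needed)  # 25000.0 full context windows of text
```

Even under these generous assumptions, a terabyte-scale corpus would need tens of thousands of full context windows, which is why retrieval remains necessary.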

Examples of RAG in practice

To take full advantage of long-context LLMs, we need architectures that fully use their capabilities while working around their remaining limitations. This section introduces a few possible approaches.

In basic RAG setups, we usually embed large chunks of text and pass those same chunks to the LLM to generate a response. However, this approach isn't always effective: a large chunk may contain unnecessary information that dilutes its embedding, obscures the key details and reduces retrieval precision.

Imagine if we could split these texts into smaller, more targeted sections without losing the contextual thread needed to generate accurate responses. By decoupling the chunks used for retrieval from those used for generation, we can improve the system's precision: smaller chunks help work around the limitations of current embedding models, while larger segments give the LLM the context it needs for a more thorough understanding.

The goal of the small-to-large retrieval strategy is to use small segments for retrieval, then supply the LLM with the bigger context from which these segments were extracted.
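Here is a minimal sketch of small-to-large retrieval in plain Python: single sentences are matched against the query, but the whole parent passage is what gets handed to the LLM. Word overlap stands in for embedding similarity, and the passages are invented for illustration; this is not LlamaIndex's implementation.

```python
import re

# Toy "large" parent passages (invented text); these are what the LLM would receive
parents = {
    "p1": "The Transformer uses self-attention. It drops recurrence entirely. This allows more parallelization.",
    "p2": "The model was trained on a translation dataset. Training used several GPUs.",
}


def words(text: str) -> set[str]:
    """Lowercase word set, used by the crude overlap score below."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


# Small child chunks (single sentences) used only for retrieval, each linked to its parent
children = [
    (sentence, parent_id)
    for parent_id, text in parents.items()
    for sentence in re.split(r"(?<=\.)\s+", text)
]


def small_to_large(query: str) -> str:
    """Match the query against small chunks, then return the best chunk's parent."""
    # Word overlap stands in for embedding similarity in this sketch
    _, parent_id = max(children, key=lambda c: len(words(query) & words(c[0])))
    return parents[parent_id]


print(small_to_large("What allows more parallelization?"))
```

The match happens on the short sentence "This allows more parallelization.", whose embedding would not be diluted by unrelated text, yet the LLM still sees the surrounding explanation about self-attention and recurrence.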

Basic RAG

Let’s take a closer look at the implementation of a basic RAG pipeline.

Step 1. Loading Documents

First, we load the document we want to analyze. For example, we can download the well-known paper Attention Is All You Need from arXiv and parse it with LlamaIndex's PDFReader loader. Once the file is downloaded, we combine all its pages into a single Document object.

```python
# Import necessary libraries
import arxiv
from pathlib import Path
from llama_index.core import Document
from llama_index.readers.file import PDFReader

# Download the paper with its arXiv ID
paper = next(arxiv.Client().results(arxiv.Search(id_list=["1706.03762"])))

# Ensure the filename is correctly specified with the intended path
paper.download_pdf(filename="./attention.pdf")  # This saves the paper locally

# Prepare the document for processing
loader = PDFReader()
documents = loader.load_data(file=Path('./attention.pdf'))

doc_text = "\n\n".join([d.get_content() for d in documents])
docs = [Document(text=doc_text)]
```

Step 2. Parsing Documents into Text Chunks (Nodes)

Next, we split the document into smaller sections, or Nodes, for easier handling. Here we use chunks of up to 1,024 tokens (chunk_size=1024); note that SentenceSplitter measures chunk_size in tokens, not characters.

```python
from llama_index.core.node_parser import SentenceSplitter

node_parser = SentenceSplitter(chunk_size=1024)

# Use the splitter configured above (not the global Settings.node_parser)
doc_nodes = node_parser.get_nodes_from_documents(docs)

# Give each node a short, readable ID
for idx, node in enumerate(doc_nodes):
    node.id_ = f"node-{idx}"

# Inspect the resulting nodes
print(doc_nodes)
```

```text
[TextNode(
    id_='node-0',
    embedding=None,
    metadata={},
    excluded_embed_metadata_keys=[],
    excluded_llm_metadata_keys=[],
    relationships={
        <NodeRelationship.SOURCE: '1'>: RelatedNodeInfo(
            node_id='132438e6-8ff4-4d16-ba3f-f46df80d8f41',
            node_type=<ObjectType.DOCUMENT: '4'>,
            metadata={},
            hash='13a349ba2062ead42d3608ecb2b0ad8c9cbb29a1f5f1898e4356f0011ad3855e'),
        <NodeRelationship.NEXT: '3'>: RelatedNodeInfo(
            node_id='1b15ac46-9085-4341-b9ae-f8fb32b317da',
            node_type=<ObjectType.TEXT: '1'>,
            metadata={},
            hash='f815e4a4db45ea87e7a3f393850afbb214a7262b3fc5fa38346e8eba6224c0e3')},
    text='Here goes your PDF text',
    start_char_idx=0,
    end_char_idx=4586,
    text_template='{metadata_str}\n\n{content}',
    metadata_template='{key}: {value}',
    metadata_seperator='\n') …]
```

Step 3. Select Embedding Model and LLM

Here, we choose models for two purposes:

- Embedding Model: Creates vector embeddings from text chunks, which are used to identify semantically similar content. In our example, we use the `text-embedding-3-small` model through `OpenAIEmbedding`.

- LLM: Takes both the user's query and the relevant text chunks to generate context-aware answers.

We register both models on the global Settings object, so the indexing and querying steps below use them automatically.

```python
from llama_index.embeddings.openai import OpenAIEmbedding
from llama_index.llms.openai import OpenAI
from llama_index.core.settings import Settings

Settings.llm = OpenAI(model="gpt-3.5-turbo")
Settings.embed_model = OpenAIEmbedding(model="text-embedding-3-small")
```

Step 4. Create Index, Retriever and Query Engine

The final step sets up the system to respond to queries by:

- Indexing: Prepares the data with `VectorStoreIndex` so it can be searched efficiently.

```python
from llama_index.core import VectorStoreIndex

# Create an index for text chunks (embeddings come from Settings.embed_model)
vector_index = VectorStoreIndex(doc_nodes)
```

- Retrieving: Finds the most relevant information based on the user's query.

```python
# Set up the retriever to fetch the 2 most similar chunks
vector_retriever = vector_index.as_retriever(similarity_top_k=2)
```

- Query Engine (e.g. `RetrieverQueryEngine`): Acts as the interface for asking questions and getting answers.

```python
from llama_index.core import get_response_synthesizer
from llama_index.core.query_engine import RetrieverQueryEngine

# Configure the query engine
response_synthesizer = get_response_synthesizer(response_mode="compact")
query_engine = RetrieverQueryEngine.from_args(
    vector_retriever, response_synthesizer=response_synthesizer
)

# Example query
response = query_engine.query("What is Attention?")
print(str(response))
```

The output would be the following:

The attention function can be described as mapping a query and a set of key-value pairs to an output — where the output is computed as a weighted sum of the values, based on the compatibility function of the query with the corresponding key. The attention mechanism allows the model to focus on different parts of the input sequence, with varying levels of importance during the translation process.

Advanced Method: Sentence Window Retrieval

To retrieve more fine-grained information, we can break the documents down into individual sentences. Each sentence then acts as a small unit of information, similar to a mini-document.

Each sentence also carries a 'context window' of the sentences surrounding it, like neighbors. Retrieval matches the query against the single sentence, but the language model receives that sentence together with its neighbors before and after it, which gives it a more complete picture.
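The windowing idea can be sketched in a few lines of plain Python. This is a simplified illustration rather than LlamaIndex's implementation: it splits sentences with a basic regular expression and stores, for each sentence, the sentence itself plus window_size neighbors on each side. The key names mirror the window and original_text metadata keys used in the LlamaIndex code that follows.

```python
import re


def sentence_windows(text: str, window_size: int = 1) -> list[dict]:
    """For each sentence, keep the sentence plus `window_size` neighbors on each side."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    nodes = []
    for i, sentence in enumerate(sentences):
        lo = max(0, i - window_size)
        hi = min(len(sentences), i + window_size + 1)
        nodes.append({
            "original_text": sentence,              # what gets embedded and matched
            "window": " ".join(sentences[lo:hi]),   # what the LLM eventually sees
        })
    return nodes


text = "Sentence one. Sentence two. Sentence three. Sentence four."
nodes = sentence_windows(text, window_size=1)
for node in nodes:
    print(node["original_text"], "->", node["window"])
```

Sentences at the edges of the document simply get shorter windows, since there are no neighbors beyond the first and last sentence.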

Step 1: Preparing the Sentences and their Contexts

First, we set up a parser that splits the document into sentences and stores a context window for each sentence in its metadata.

```python
from llama_index.core import Settings, VectorStoreIndex
from llama_index.core.node_parser import SentenceSplitter, SentenceWindowNodeParser

# Setting up the parser to identify sentences and their context windows
node_parser = SentenceWindowNodeParser.from_defaults(
    window_size=3,  # 3 sentences on each side of the matched sentence
    window_metadata_key="window",
    original_text_metadata_key="original_text",
)

# Regular chunk splitter: set as the global default and used for baseline chunks
text_splitter = SentenceSplitter()
Settings.text_splitter = text_splitter

# Extracting sentences and their contexts from the documents
sentence_nodes = node_parser.get_nodes_from_documents(docs)
base_nodes = text_splitter.get_nodes_from_documents(docs)

# Preparing the sentences for retrieval
sentence_index = VectorStoreIndex(sentence_nodes)
```

Step 2: Setting Up the Query System

Next, we create a system to find the best matching sentences and their contexts, based on a given question.

```python
from llama_index.core.postprocessor import MetadataReplacementPostProcessor

# Setting up the query engine with a focus on retrieving sentence contexts
query_engine = sentence_index.as_query_engine(
    similarity_top_k=2,
    # the target key defaults to `window` to match the node_parser's default
    node_postprocessors=[
        MetadataReplacementPostProcessor(target_metadata_key="window")
    ],
)

window_response = query_engine.query("What is the Attention?")
print(window_response)
```

The answer from our LLM:

The attention is a mechanism that relates different positions of a single sequence in order to compute a representation of the sequence.

Intelligent Routing

Another promising way to make RAG more effective, especially with long-context LLMs, is to add an intelligent routing layer.

LLMs with a broad context window bring us face-to-face with a crucial dilemma: how much context is exactly right for each unique case? Adding too much context can lead to significant real-world issues, like increased costs and slower response times, which may not be worth it for every question or task. Although we might see these challenges diminish as technology advances, finding a smart balance is essential in the meantime. An intelligent routing layer offers a practical solution to this problem.
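One simple way to cap the cost of context is a budget: rank the retrieved chunks and pack the best ones until the budget is used up. This is a minimal sketch, not a method from the original setup; word counts stand in for token counts, and the relevance scores are assumed to come from the retriever.

```python
def pack_context(chunks: list[tuple[float, str]], budget_words: int) -> list[str]:
    """Greedily add the highest-scoring chunks until the word budget would be exceeded."""
    selected, used = [], 0
    for score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        n = len(text.split())
        if used + n > budget_words:
            continue  # skip a chunk that does not fit; a smaller one later might
        selected.append(text)
        used += n
    return selected


# (score, text) pairs as a retriever might return them; texts are invented
retrieved = [
    (0.9, "attention maps queries to outputs"),
    (0.7, "multi head attention runs several attention layers in parallel"),
    (0.5, "positional encodings add order"),
]
print(pack_context(retrieved, budget_words=10))
# ['attention maps queries to outputs', 'positional encodings add order']
```

A larger budget admits more context at higher cost and latency; a smaller one is cheaper but may drop useful chunks, which is exactly the trade-off a routing layer tries to manage per question.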

The effectiveness of existing RAG methods, such as top-k retrieval followed by synthesis, varies greatly depending on the query. These methods work well for questions about specific facts, but they can struggle with queries that require a summary of a whole document or an answer assembled from several parts. Such queries need a more nuanced approach, perhaps placing all the relevant context directly in the prompt, or using a 'chain of thought' method that combines retrieval with reasoning.

An intelligent routing system should therefore sit on top of multiple RAG and LLM synthesis pipelines built over the same data. For each question, it aims to identify the most efficient and effective strategy for retrieving relevant context. Doing so keeps the solution both flexible and economically viable.
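The core control flow of a router is small: describe each route, pick one per question, and dispatch. In this sketch a keyword heuristic replaces the LLM-based selector (an intentional simplification), and the two engines are hypothetical stubs; the LlamaIndex implementation in the steps below uses an LLM to make the choice.

```python
from typing import Callable


def answer_with_top_k(question: str) -> str:
    return f"[top-k retrieval] {question}"  # hypothetical stand-in for a vector query engine


def answer_with_summary(question: str) -> str:
    return f"[full-document summary] {question}"  # hypothetical stand-in for a summary engine


# Each route pairs a description (what an LLM selector would read) with an engine
ROUTES: list[tuple[str, Callable[[str], str]]] = [
    ("Useful for questions about specific sections of the paper", answer_with_top_k),
    ("Useful for questions that ask for a summary of the whole paper", answer_with_summary),
]


def choose_route(question: str) -> int:
    """Toy heuristic in place of the LLM selector; returns a 1-based route number."""
    wants_summary = any(w in question.lower() for w in ("summary", "summarize", "overview"))
    return 2 if wants_summary else 1


def route(question: str) -> str:
    _, engine = ROUTES[choose_route(question) - 1]
    return engine(question)


print(route("What is attention?"))
print(route("Can you give a summary of this paper?"))
```

Replacing choose_route with an LLM call that reads the route descriptions is what turns this skeleton into an intelligent router.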

Step 1. Loading Documents Again

Start by loading documents again. For the purposes of this example you can repeat the document loading process from the previous example.

Step 2. Define Indexes

Define both a vector index and a summary index over this data, as in the Basic RAG example, and create a query engine for each. The router will choose between these two engines.

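```python
from llama_index.core import SummaryIndex, VectorStoreIndex
from llama_index.core.node_parser import SentenceSplitter

splitter = SentenceSplitter(chunk_size=1024)
vector_index = VectorStoreIndex.from_documents(
    docs, transformations=[splitter]
)
summary_index = SummaryIndex.from_documents(
    docs, transformations=[splitter]
)

# One query engine per route: top-k retrieval vs. summarizing all chunks
vector_query_engine = vector_index.as_query_engine()
summary_query_engine = summary_index.as_query_engine(response_mode="tree_summarize")
```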

Step 3. Define RouterQueryEngine

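```python
from typing import List

from llama_index.core import PromptTemplate
from llama_index.core.base.base_query_engine import BaseQueryEngine
from llama_index.core.bridge.pydantic import BaseModel, Field
from llama_index.core.query_engine import CustomQueryEngine
from llama_index.core.response_synthesizers import TreeSummarize
from llama_index.llms.openai import OpenAI
from llama_index.program.openai import OpenAIPydanticProgram


class Answer(BaseModel):
    """One routing decision: a 1-based choice number and the LLM's reason."""
    choice: int
    reason: str


class Answers(BaseModel):
    """Represents a list of answers."""
    answers: List[Answer]


def get_choice_str(choices: List[str]) -> str:
    """Format the route descriptions as a numbered list for the prompt."""
    return "\n\n".join(f"{idx + 1}. {c}" for idx, c in enumerate(choices))


# Illustrative routing prompt; {context_list} and {query_str} are filled at query time
router_prompt1 = PromptTemplate(
    "Some choices are given below as a numbered list, where each item describes"
    " a way to answer questions.\n"
    "---------------------\n{context_list}\n---------------------\n"
    "Using only the choices above and not prior knowledge, return the top choices"
    " (only select what is needed) that are most relevant to the question:"
    " '{query_str}'\n"
)


class RouterQueryEngine(CustomQueryEngine):
    """Use our Pydantic program to perform routing."""

    query_engines: List[BaseQueryEngine]
    choice_descriptions: List[str]
    verbose: bool = False
    router_prompt: PromptTemplate
    llm: OpenAI
    summarizer: TreeSummarize = Field(default_factory=TreeSummarize)

    def custom_query(self, query_str: str):
        """Define custom query."""
        program = OpenAIPydanticProgram.from_defaults(
            output_cls=Answers,
            prompt=self.router_prompt,  # use the prompt passed to this engine
            verbose=self.verbose,
            llm=self.llm,
        )

        choices_str = get_choice_str(self.choice_descriptions)
        output = program(context_list=choices_str, query_str=query_str)

        # print choice and reason, and query the underlying engine
        if self.verbose:
            print("Selected choice(s):")
            for answer in output.answers:
                print(f"Choice: {answer.choice}, Reason: {answer.reason}")

        responses = []
        for answer in output.answers:
            choice_idx = answer.choice - 1  # choices are numbered from 1 in the prompt
            query_engine = self.query_engines[choice_idx]
            response = query_engine.query(query_str)
            responses.append(response)

        # if a single choice is picked, we can just return that response
        if len(responses) == 1:
            return responses[0]
        else:
            # if multiple choices are picked, we can pick a summarizer
            response_strs = [str(r) for r in responses]
            result_response = self.summarizer.get_response(
                query_str, response_strs
            )
            return result_response
```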

Next, we describe the two routes in terms of the chosen paper and build the router:

```python
choices = [
    "Useful for answering questions about specific sections of the Attention layer paper",
    "Useful for questions that ask for a summary of the whole paper",
]

router_query_engine = RouterQueryEngine(
    query_engines=[vector_query_engine, summary_query_engine],
    choice_descriptions=choices,
    verbose=True,
    router_prompt=router_prompt1,
    llm=OpenAI(model="gpt-4"),
)
```

Step 4. Let’s test it

Now we ask the router to choose a route for answering our question:

```python
response = router_query_engine.query("What is the Attention?")
print(response)
```

```text
Function call: Answers with args: {
  "answers": [
    {
      "choice": 1,
      "reason": "The question asks about a specific concept ('Attention') which is likely to be covered in a specific section of the paper."
    }
  ]
}
```

After correctly picking the right route, the engine prints its selection and the LLM answers:

```text
Selected choice(s):
Choice: 1, Reason: The question asks about a specific concept ('Attention') which is likely to be covered in a specific section of the paper.
```

The attention mechanism is a key component in sequence modeling and transduction models that allows for modeling dependencies, without considering their distance in the input or output sequences. It is typically used in conjunction with recurrent networks. But in the proposed Transformer model, attention is utilized as the sole mechanism to establish global dependencies between input and output, without the need for recurrence.

Let’s also test it with another question:

```python
response = router_query_engine.query("Can you give a summary of this paper?")
print(response)
```

```text
Function call: Answers with args: {
  "answers": [
    {
      "choice": 2,
      "reason": "This choice is about providing a summary of the whole paper, which directly answers the question."
    }
  ]
}
Selected choice(s):
Choice: 2, Reason: This choice is about providing a summary of the whole paper, which directly answers the question.
```

The paper presents the Transformer model, a novel architecture that relies on attention mechanisms for sequence transduction tasks. It eliminates the need for recurrence and convolutions, utilizing stacked self-attention and point-wise fully connected layers in both the encoder and decoder. This design enables more parallelization, reduces training time and achieves top-notch results in translation tasks. The model employs multi-head attention to jointly process information from various representation subspaces at different positions, along with self-attention, position-wise feed-forward networks, embeddings and softmax functions.

Conclusions

RAG stands out as a transformative approach, bridging the gap between the vast repository of human knowledge and the computational power of LLMs. By integrating indexing, retrieval and generation stages, RAG not only improves response accuracy and relevance but also introduces flexibility for domain-specific customization and real-time updates.

As we address the challenges and limitations of embedding models and computational requirements, practical implementations of RAG across industries highlight its usefulness for information retrieval. Our LLM consulting team helps organizations implement RAG solutions tailored to their specific use cases.