A Comparison of Reinforcement Learning Frameworks

by Dr. Phil Winder, CEO

Reinforcement Learning (RL) frameworks help engineers by creating higher level abstractions of the core components of an RL algorithm. This makes code easier to develop, easier to read and improves efficiency.

But choosing a framework introduces some amount of lock-in. An investment in learning and using a framework can make it hard to break away. This is just like when you decide which pub to visit. It’s very difficult not to buy a beer, no matter how bad the place is.

In this post I provide some notes about the most popular RL frameworks available. I also present some crude summary statistics from Github and Google (which you can’t trust) to attempt to quantify their popularity.

Original Purpose of this Work

I am writing a book on RL for O’Reilly. As a part of that book, I want to demonstrate to my readers how to build and design various RL agents. I think the readers will benefit by using code from an already-established framework or library. And in any case, the people writing these frameworks would probably do a better job than I could anyway.

So the question was, “which framework?”. Which led me down this path. It started with a few frameworks, but then I found more. And more. And it turns out there are quite a lot of frameworks already available, which turned this into an 8000-word monster. Apologies in advance for the length. I don’t expect many people to read all of it!

Because of the length, this also took a while to write. This means that the reviews don’t have laser-focus. Sometimes I comment about one thing in one framework and not at all in another. Apologies for this; it was not intended to be exhaustive.

Methodology

Most of the evaluation is pure opinion. But there are a few quantitative metrics we can look at, namely the repository statistics that GitHub makes available. Stars roughly represent how well known each framework is, but they do not represent quality. More often than not the frameworks with the most stars have more marketing power.

After that, I was looking for a combination of modularity, ease of use, flexibility and maturity. Simplicity was also desired, but this is usually mutually exclusive with modularity and flexibility. The opinions presented below are based upon these ideals.

One recurring theme is the dominance of Deep Learning (DL) frameworks within the RL framework. Quite often the DL framework would break out above the abstractions and the RL framework would just be an extension of the former. This means that if you are in a situation where you are already in bed with a particular DL solution, then you might as well stick with that.

But to me, that represents lock-in. My preference would always tend towards the frameworks that don’t mandate a specific DL implementation or don’t use DL at all (shock/horror!). The result is that all the Google frameworks tend towards Tensorflow, all the academic frameworks use PyTorch, and then there are a few brave souls dangling in-between with twice as much work as everyone else.

I also attempted to look at the Google rankings for each framework, but that turned out to be unreliable.

Accompanying Notebook

Where I could, or where it made sense, I tried out a lot of these frameworks. Many of them didn’t work. And some of them had excellent Notebooks to begin with, so you can just check those out.

For the rest, I have published a gist that you can run on Google Colaboratory. This is presented in a very raw format. It is not meant to be comprehensive or explanatory. I simply wanted to double check that in the simplest of cases, it worked.

In each section I also present a “Getting Started” sub-heading that demonstrates the basic example from each framework. This code is from the Notebook.

Reinforcement Learning Frameworks

The following frameworks are listed in order of the number of stars in their Github repository as of June 2019. The actual number of stars and other metrics are presented as badges just below the title of each framework.

The following frameworks are compared:

- OpenAI Gym

- Google Dopamine

- RLLib

- Keras-RL

- TRFL

- Tensorforce

- Facebook Horizon

- Nervana Systems Coach

- MAgent

- SLM-Lab

- DeeR

- Garage

- Surreal

- RLgraph

- Simple RL

OpenAI Gym

OpenAI is a non-profit, pure research company. They provide a range of open-source Deep and Reinforcement Learning tools to improve repeatability, create benchmarks and improve upon the state of the art. I like to think of them as a bridge between academia and industry.

But I know what you’re thinking. “Phil, Gym is not a framework. It is an environment.”. I know, I know. It provides a range of toy environments, classic control, robotics, video games and board games to test your RL algorithm against.

But I have included it here because it is used so often as the basis for custom work. People use it like a framework. Think of it as an interface between an RL implementation and the environment. It is so ubiquitous that many of the other frameworks listed below also interface with Gym. Furthermore, it acts as a baseline to compare everything against, since it is one of the most popular repositories in RL.
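To make the "interface" idea concrete, here is a minimal sketch of a toy environment that mimics the reset/step shape used in the Gym example in this post (step returning four values). It deliberately does not import Gym. The class CoinGuessEnv and the helper run_episode are hypothetical names invented for illustration, so treat this as a rough picture of the contract and not as Gym's own code.

```python
import random


class CoinGuessEnv:
    """Hypothetical toy environment that mimics the reset/step shape."""

    def __init__(self, max_steps=10, seed=0):
        self.max_steps = max_steps
        self.rng = random.Random(seed)
        self.steps = 0
        self.coin = 0

    def reset(self):
        """Start a new episode and return the first observation."""
        self.steps = 0
        self.coin = self.rng.randint(0, 1)
        return self.coin

    def step(self, action):
        """Apply an action and return (observation, reward, done, info)."""
        reward = 1.0 if action == self.coin else 0.0
        self.steps += 1
        self.coin = self.rng.randint(0, 1)
        done = self.steps >= self.max_steps
        return self.coin, reward, done, {}


def run_episode(env, policy):
    """Agent-side loop that relies only on reset() and step()."""
    observation = env.reset()
    total_reward, done = 0.0, False
    while not done:
        observation, reward, done, _ = env.step(policy(observation))
        total_reward += reward
    return total_reward


# The observation reveals the coin, so a policy that echoes it always scores.
print(run_episode(CoinGuessEnv(), policy=lambda obs: obs))
```

Because run_episode only touches reset() and step(), any object with those two methods can be swapped in, which is roughly why so many projects treat this shape as a common interface.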

Getting Started

Gym is both cool and problematic because of its realistic 3D environments. If you want to visualise what is going on you need to be able to render these environments. It pretty much works on your laptop, but struggles when you try and run it in a Notebook because of limitations with the browser.

To work around this, you have to use a virtual display. Basically we have to mock out the video driver. This means most of the “getting started” code is video wrapping code.

If we ignore all the boring stuff, which you can find in the accompanying Notebook, the core gym code looks like:

import gym
from gym.wrappers.monitoring.video_recorder import VideoRecorder  # Because we want to record a video

env = gym.make("CartPole-v1")  # Create the cartpole environment
rec = VideoRecorder(env)  # Create the video recorder
env.reset()  # Reset the environment before rendering or stepping
rec.capture_frame()  # Capture the starting position

while True:
    action = env.action_space.sample()  # Use a random action
    observation, reward, done, info = env.step(action)  # to take a single step in the environment
    rec.capture_frame()  # and record
    if done:
        break  # If the pole has fallen, quit.

rec.close()  # Close the recording
env.close()  # Close the environment

As you can see, we are performing actions until the pole has fallen. This simple API is repeated for all environments. This has become so popular that people have built extensions that use the same API but with new environments.

As you can see, it falls straight away because we’re just passing random actions at the moment. But still, there’s something hypnotic, something drum-and-bass about it.

But now let’s look at some agent framework only options.

Google Dopamine

Google Dopamine: “Not an Official Google product” (NOGP - an acronym I’m going to coin now) but written by Google employees and hosted on Google’s GitHub. So, Google Dopamine then. It is a relatively new entrant to the RL framework space that appears to have been a hit. It boasts a large number of GitHub stars and some amount of Google trend ranking. This is especially surprising because of the limited number of commits, committers and time since the project was launched. Clearly it helps to have Google’s branding and marketing department.

Anyway, the cool thing about this framework is that it emphasises configuration as code through its use of the Google gin-config configuration framework. The idea is that you have lots of pluggable bits that you plumb together through a configuration file. The benefit is that this allows people to release a single configuration file that contains all of the parameters specific to that run. And gin-config makes things special because it allows you to plumb together objects; instances of classes and lambdas and things like that.

The downside is that you increase the complexity in the configuration file and it can end up like just another file full of code that people can’t understand because they are not used to it. Personally I would always stick to a “dumb” configuration file like Kubernetes Manifests or JSON (like many of the other frameworks), for example. The wiring should be done in the code.

The one major benefit is that it promotes pluggability and reuse, which are key OOP and Functional concepts that are often ignored when developing Data Science projects.
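For contrast, the "dumb configuration, wiring in code" preference can be sketched in plain Python. The JSON holds only values, and a small builder function decides which classes to construct. Everything here (Adam, SGD, Agent, build_agent and the config keys) is a hypothetical stand-in, not Dopamine's or gin-config's API.

```python
import json

# Plain data only: no object references and no injection.
CONFIG_JSON = """
{
  "agent": {"gamma": 0.99, "update_period": 4},
  "optimizer": {"name": "adam", "learning_rate": 0.001}
}
"""


class Adam:
    """Stand-in optimiser that just stores its settings."""

    def __init__(self, learning_rate):
        self.learning_rate = learning_rate


class SGD:
    """A second stand-in, to show that swapping is a one-word config change."""

    def __init__(self, learning_rate):
        self.learning_rate = learning_rate


# The only place where config strings are mapped to classes.
OPTIMIZERS = {"adam": Adam, "sgd": SGD}


class Agent:
    """Stand-in agent that records what it was built with."""

    def __init__(self, gamma, update_period, optimizer):
        self.gamma = gamma
        self.update_period = update_period
        self.optimizer = optimizer


def build_agent(config):
    """All of the wiring lives here, in ordinary Python."""
    optimizer_config = dict(config["optimizer"])  # copy so the input is untouched
    optimizer_class = OPTIMIZERS[optimizer_config.pop("name")]
    return Agent(optimizer=optimizer_class(**optimizer_config), **config["agent"])


config = json.loads(CONFIG_JSON)
agent = build_agent(config)
print(type(agent.optimizer).__name__, agent.optimizer.learning_rate)
```

The trade-off is that adding a new pluggable part means touching build_agent, whereas the gin approach lets the configuration file reach into the code directly.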

It is clear that it has gained a significant amount of traction in a very short time. And frankly, that worries me a bit. There are four contributors and only 100 commits. Of those four people, three are from the community (bug-fixes, etc.). This leaves one person. And this one person has committed, wait for it, over 1.3 million lines of code.

Clearly there’s something fishy going on here. From the commit history it looks like the code was transferred from another repo. A 1.2 million line commit isn’t exactly best practice! :-) It’s Apache licensed, so there’s nothing too strange going on, but the copyright has been assigned to Google Inc. But I’m reassured by the contributor agreement.

In terms of modularity, there isn’t much. There isn’t any abstraction for the Agents; they are implemented directly and configured from the gin config. There aren’t many implemented either. There isn’t any official abstraction of an environment either. In fact, it looks like they are just passing back core Tensorflow objects everywhere and assuming that you will use the Tensorflow interface. In short, very little official OOP-style abstraction, which is different to most of the other frameworks.

In short, little modularity, reuse is clunky (IMO) and although it appears to be popular, it isn’t very mature and doesn’t have community support.

Getting Started

Again you can find the example in the accompanying Notebook, but the premise is to build your RL algorithm via a configuration file. This is what it looks like:

DQN_PATH = os.path.join(BASE_PATH, 'dqn')

# Modified from dopamine/agents/dqn/config/dqn_cartpole.gin

dqn_config = """

# Hyperparameters for a simple DQN-style Cartpole agent. The hyperparameters

# chosen achieve reasonable performance.

import dopamine.discrete_domains.gym_lib

import dopamine.discrete_domains.run_experiment

import dopamine.agents.dqn.dqn_agent

import dopamine.replay_memory.circular_replay_buffer

import gin.tf.external_configurables

DQNAgent.observation_shape = %gym_lib.CARTPOLE_OBSERVATION_SHAPE

DQNAgent.observation_dtype = %gym_lib.CARTPOLE_OBSERVATION_DTYPE

DQNAgent.stack_size = %gym_lib.CARTPOLE_STACK_SIZE

DQNAgent.network = @gym_lib.cartpole_dqn_network

DQNAgent.gamma = 0.99

DQNAgent.update_horizon = 1

DQNAgent.min_replay_history = 500

DQNAgent.update_period = 4

DQNAgent.target_update_period = 100

DQNAgent.epsilon_fn = @dqn_agent.identity_epsilon

DQNAgent.tf_device = '/gpu:0' # use '/cpu:*' for non-GPU version

DQNAgent.optimizer = @tf.train.AdamOptimizer()

tf.train.AdamOptimizer.learning_rate = 0.001

tf.train.AdamOptimizer.epsilon = 0.0003125

create_gym_environment.environment_name = 'CartPole'

create_gym_environment.version = 'v0'

create_agent.agent_name = 'dqn'

TrainRunner.create_environment_fn = @gym_lib.create_gym_environment

Runner.num_iterations = 100

Runner.training_steps = 100

Runner.evaluation_steps = 100

Runner.max_steps_per_episode = 200 # Default max episode length.

WrappedReplayBuffer.replay_capacity = 50000

WrappedReplayBuffer.batch_size = 128

"""

gin.parse_config(dqn_config, skip_unknown=False)

That’s quite a lot. But it’s implementing a more complicated algorithm so we might expect that. I’m quite happy about the hyperparameters being in there, but I’m not sure that I am a fan of all the dynamic injection (the @ denotes a reference to a configurable function or class, and appending () injects the result of calling it, such as an instance of a class). Proponents would say that “wow, look, I can just swap out the optimiser just by changing this line”. But I’m of the opinion that I could do that with plain old Python too.

After a bit of training:

tf.reset_default_graph()

dqn_runner = run_experiment.create_runner(DQN_PATH, schedule='continuous_train')

dqn_runner.run_experiment()

Then we can run some similar code to before to generate a nice video:

rec = VideoRecorder(dqn_runner._environment.environment)
action = dqn_runner._initialize_episode()
rec.capture_frame()

while True:
    observation, reward, is_terminal = dqn_runner._run_one_step(action)
    rec.capture_frame()
    if is_terminal:
        break  # If the pole has fallen, quit.
    else:
        action = dqn_runner._agent.step(reward, observation)

dqn_runner._end_episode(reward)
rec.close()

RLLib via ray-project

Ray started life as a project that aimed to help Python users build scalable software, primarily for ML purposes. Since then it has added several modules that are dedicated to specific ML use cases. One is distributed hyperparameter tuning and the other is distributed RL.

The consequence of this generalisation is that the popularity numbers are probably more due to the hyperparameter and general purpose scalability use case, rather than RL. Also, the distributed focus of the library means that the agent implementations tend to be those that are inherently distributed (e.g. A3C) or are attempting to solve problems that are so complex they need distributing so that they don’t take years to converge (e.g. Rainbow).

Despite this, if you are looking to productionise RL, or if you are repeating training many times for hyperparameter tuning or environment improvements, then it probably makes sense to use ray to be able to scale up and reduce feedback times. In fact, a number of other frameworks (specifically: SLM-Lab and RLgraph) actually use ray under the hood for this purpose.

I believe there is a strong applicability to RL here. The clear focus on distributed computation is good. The sheer number of commits and contributors is also reassuring. But there is a lot of the underlying code in C++. Some even in Java. Only 60% is Python.

Despite this there is a very clear abstraction for Policies, a nice, almost functional interface for agents called Trainers (see the DQN implementation for an example of its usage), a Model abstraction that allows the use of PyTorch or Tensorflow (yay!) and a few more for evaluation and policy optimisation.

Overall the documentation is excellent and clear architectural drawings are presented (see this example, for example). It is modular, scales well and is very well supported and accepted by the community. The only downside is the complexity of it all. That’s the price you pay for all this functionality.

Getting Started

There is an issue with the version of pyarrow preinstalled in Google colab that isn’t compatible with ray. You have to uninstall the preinstalled version and restart the runtime, then it works.

I also couldn’t get the video rendering working in the same way we made the previous examples work. My hypothesis is that because they are running in separate processes they don’t have access to the fake pyvirtualdisplay device.

So despite this, let us try an example:

!pip uninstall -y pyarrow

!pip install tensorflow ray[rllib] > /dev/null 2>&1

After you remove pyarrow and install rllib, you must restart the Notebook kernel. Next, import ray:

import ray

from ray import tune

ray.init()

And run a hyperparameter tuning job for the Cartpole environment using a DQN:

tune.run(
    "DQN",
    stop={"episode_reward_mean": 100},
    config={
        "env": "CartPole-v0",
        "num_gpus": 0,
        "num_workers": 1,
        "lr": tune.grid_search([0.01, 0.001, 0.0001]),
        "monitor": False,
    },
)

There is a lot of syntactic sugar here, but it looks reasonably straightforward to customise the training functionality (docs).
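Conceptually, the grid search over lr in the call above asks for one trial per listed value. The plain-Python sketch below shows that expansion for a config dictionary. It is not how Ray implements it, and expand_grid is a hypothetical helper written only for this illustration.

```python
import itertools


def expand_grid(base_config, grid):
    """Return one config dict per combination of the grid values."""
    keys = sorted(grid)
    configs = []
    for values in itertools.product(*(grid[key] for key in keys)):
        config = dict(base_config)  # copy the fixed settings
        config.update(zip(keys, values))  # overlay this combination
        configs.append(config)
    return configs


trials = expand_grid(
    {"env": "CartPole-v0", "num_workers": 1},
    {"lr": [0.01, 0.001, 0.0001]},
)
for trial in trials:
    print(trial)
```

Adding a second searched parameter multiplies the number of trials, which is exactly the situation where being able to distribute the runs starts to pay off.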

Keras-RL

I love Keras. I love the abstraction, the simplicity, the anti-lock-in. When you look at the code below you can see the Keras magic. So you would think that keras-rl would be a perfect fit. However it doesn’t seem to have obtained as much traction as the other frameworks. If you look at the documentation, it’s empty. When you look at the commits there are only a few brave souls that have done most of the work. Compare this to the main Keras project.

And I think I might know why. Keras was built from the ground up to allow users to quickly prototype different DL structures. This relied on the fact that the Neural Network primitives could be abstracted and modular. But when you look at the code for keras-rl, it’s implemented like it is in the textbooks. Each agent has its own implementation despite the similarities between SARSA and DQN, for example. Think of all the “tricks” that could be modularised, tricks like those that are used for Rainbow, which could allow people to experiment using these tricks in other agents. There is some level of modularity, but I think it is at a level that is too high.
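To illustrate the kind of sharing I mean, here is a tabular sketch in which SARSA and Q-learning (the tabular cousin of DQN) use one update rule and differ only in a pluggable target function. This is not keras-rl code. The names TabularAgent, q_learning_target and sarsa_target are hypothetical, and terminal-state handling is left out to keep it short.

```python
from collections import defaultdict


def q_learning_target(q, reward, next_state, next_action, gamma):
    """Off-policy: bootstrap from the best action in the next state."""
    return reward + gamma * max(q[next_state].values(), default=0.0)


def sarsa_target(q, reward, next_state, next_action, gamma):
    """On-policy: bootstrap from the action actually taken next."""
    return reward + gamma * q[next_state][next_action]


class TabularAgent:
    """One update rule; only the bootstrap target is swapped."""

    def __init__(self, target_fn, alpha=0.5, gamma=0.9):
        self.q = defaultdict(lambda: defaultdict(float))
        self.target_fn = target_fn
        self.alpha = alpha
        self.gamma = gamma

    def update(self, state, action, reward, next_state, next_action):
        """Move Q(state, action) a fraction alpha towards the target."""
        target = self.target_fn(self.q, reward, next_state, next_action, self.gamma)
        self.q[state][action] += self.alpha * (target - self.q[state][action])
        return self.q[state][action]


for name, target_fn in [("q-learning", q_learning_target), ("sarsa", sarsa_target)]:
    agent = TabularAgent(target_fn)
    agent.q["s1"]["left"] = 1.0
    agent.q["s1"]["right"] = 2.0
    print(name, agent.update("s0", "go", 0.0, "s1", "left"))
```

With the same transition, the two agents land on different values purely because of the target function, which is the sort of small, swappable piece that could be shared between agents.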

But maybe it’s not too late, because there is so much promise here. If there were enough people interested, or maybe if there was more support from the core Keras project, then maybe this could be the go-to RL framework of the future. But for now, I don’t think it is. It’s almost as easy to obtain the benefits of Keras by using other frameworks that we have already discussed.

Getting Started

The examples worked out of the box here and the only modifications I made were to use a mock Display and add some video recording of the tests. You can see that most of the code here is standard Keras code. The additions by Keras-RL aren’t really Keras related at all.

import numpy as np
import gym
from gym.wrappers import Monitor  # Used below to record the test episodes

from keras.models import Sequential
from keras.layers import Dense, Activation, Flatten
from keras.optimizers import Adam

from rl.agents.dqn import DQNAgent
from rl.policy import BoltzmannQPolicy
from rl.memory import SequentialMemory

ENV_NAME = 'CartPole-v0'

# Get the environment and extract the number of actions.
env = gym.make(ENV_NAME)
np.random.seed(123)
env.seed(123)
nb_actions = env.action_space.n

# Next, we build a very simple model.
model = Sequential()
model.add(Flatten(input_shape=(1,) + env.observation_space.shape))
model.add(Dense(16))
model.add(Activation('relu'))
model.add(Dense(16))
model.add(Activation('relu'))
model.add(Dense(16))
model.add(Activation('relu'))
model.add(Dense(nb_actions))
model.add(Activation('linear'))
print(model.summary())

# Finally, we configure and compile our agent. You can use every built-in Keras optimizer and
# even the metrics!
memory = SequentialMemory(limit=5000, window_length=1)
policy = BoltzmannQPolicy()
dqn = DQNAgent(model=model, nb_actions=nb_actions, memory=memory, nb_steps_warmup=10,
               target_model_update=1e-2, policy=policy)
dqn.compile(Adam(lr=1e-3), metrics=['mae'])

# Okay, now it's time to learn something! We visualize the training here for show, but this
# slows down training quite a lot. You can always safely abort the training prematurely using
# Ctrl + C.
dqn.fit(env, nb_steps=2500, visualize=True, verbose=2)

# After training is done, we save the final weights.
dqn.save_weights('dqn_{}_weights.h5f'.format(ENV_NAME), overwrite=True)

# Finally, evaluate our algorithm for 5 episodes.
dqn.test(Monitor(env, '.'), nb_episodes=5, visualize=True)

TRFL

TRFL is an opinionated extension to Tensorflow by DeepMind (so NOGP then ;-) ). Given the credentials you would have expected it to be popular, but the first thing you notice is the distinct lack of commits. Then the distinct lack of examples and Tensorflow 2.0 support.

The main issue is that it is too low-level. It’s the exact opposite of Keras-RL. The functionality that TRFL provides is a few helper functions, a q-learning value function for example, which takes in a load of Tensorflow Tensors with abstract names.

Getting Started

I recommend taking a quick look at this Notebook as an example. But note that the code is very low level.

Tensorforce

Tensorforce has similar aims to TRFL. It attempts to abstract RL primitives whilst targeting Tensorflow. By using Tensorflow you gain all of its benefits, i.e. graph models and easier, cross-platform deployment.

There are four high-level abstractions of an Environment, Runner, Agent and Model. These mostly do what you would expect, but the “Model” abstraction is not something you would normally see. A Model sits within an Agent and defines the policy of the agent. This is nice because, for example, the standard Q-learning model can be overridden by the Q-learning n-step model, only changing one small function. This is precisely the middle ground between TRFL and Keras that I was looking for. And it’s implemented in an OOP way, which some people will like, others won’t. But at least the abstraction is there.
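As a rough picture of that "override one small function" idea, the sketch below has a one-step model and an n-step subclass that replaces only the target calculation. It is plain Python, not Tensorforce code. QModel, NStepQModel and their method names are hypothetical, and the caller is assumed to pass a bootstrap value that matches the horizon (one step ahead or n steps ahead).

```python
class QModel:
    """Hypothetical one-step model: target = r_0 + gamma * bootstrap."""

    def __init__(self, gamma=0.5):
        self.gamma = gamma

    def target(self, rewards, bootstrap_value):
        """Use only the first reward, then bootstrap one step ahead."""
        return rewards[0] + self.gamma * bootstrap_value

    def td_error(self, q_value, rewards, bootstrap_value):
        """Shared logic that every subclass inherits unchanged."""
        return self.target(rewards, bootstrap_value) - q_value


class NStepQModel(QModel):
    """Same model; only the target calculation is overridden."""

    def target(self, rewards, bootstrap_value):
        """Sum the discounted rewards, then bootstrap n steps ahead."""
        n_step_return = sum(self.gamma ** i * r for i, r in enumerate(rewards))
        return n_step_return + self.gamma ** len(rewards) * bootstrap_value


rewards = [1.0, 1.0, 1.0]
print(QModel().target(rewards, bootstrap_value=8.0))
print(NStepQModel().target(rewards, bootstrap_value=8.0))
```

Everything else, such as the td_error calculation here, is inherited, which is what makes this level of abstraction pleasant to extend.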

The downside of libraries like this, or any DL focused RL library, is that much of the code is complicated by the underlying DL framework. The same is true here. For example, the random model, i.e. one that chooses a random action, something that should take precisely one line of code, is 79 lines long. I’m being a little facetious here (license, class boilerplate, newlines, etc.) but hopefully you understand my point.

It also means that there is no implementation of “simple” RL algorithms, i.e. those that don’t use models. E.g. entropy, bandits, simple MDPs, SARSA, some tabular methods, etc. And the reason for this is that you don’t need a DL framework for these models.

So in summary, I think the level of abstraction is spot on. But the benefits/problems of limiting yourself to a DL framework remain.

Note that this is based upon version 0.4.3 and a major rewrite is underway.

Getting Started

The getting started example is sensible. We’re creating an environment, an agent and a runner (the thing that actually does the training). The specifications for the agent are a little different though. It reminds me of the Dopamine Gin config, except it’s using standard JSON. In the example I’m getting those specifications from their examples directory, but you can imagine how easy it would be to run a hyperparameter search using them.

import json
import urllib.request

# Import paths assumed for the Tensorforce 0.4.x series
from tensorforce.agents import Agent
from tensorforce.contrib.openai_gym import OpenAIGym
from tensorforce.execution import Runner

environment = OpenAIGym(
    gym_id="CartPole-v0",
    monitor=".",
    monitor_safe=False,
    monitor_video=10,
    visualize=True
)

# Download the agent specification from the examples directory
with urllib.request.urlopen("https://raw.githubusercontent.com/tensorforce/tensorforce/master/examples/configs/dqn.json") as url:
    agent = json.loads(url.read().decode())
    print(agent)

# Download the network specification from the examples directory
with urllib.request.urlopen("https://raw.githubusercontent.com/tensorforce/tensorforce/master/examples/configs/mlp2_network.json") as url:
    network = json.loads(url.read().decode())
    print(network)

agent = Agent.from_spec(
    spec=agent,
    kwargs=dict(
        states=environment.states,
        actions=environment.actions,
        network=network
    )
)

runner = Runner(
    agent=agent,
    environment=environment,
    repeat_actions=1
)

runner.run(
    num_timesteps=200,
    num_episodes=200,
    max_episode_timesteps=200,
    deterministic=True,
    testing=False,
    sleep=None
)

runner.close()

Horizon

Horizon is a framework from Facebook that is dominated by PyTorch. Another DL focused library then. Also:

The main use case of Horizon is to train RL models in the batch setting. … Specifically, we try to learn the best possible policy given the input data.

So like other frameworks the focus is off-policy, model driven RL with DL in the model. But this is differentiated due to the use of PyTorch. You could also compare this to Keras-RL using PyTorch as the backend for Keras.

I’ve already discussed the cons of such a focused framework in the Tensorforce section, so I won’t state them again.

There are several interesting differences though. There is no tight Gym integration. Instead they intentionally decouple the two by dumping Gym data into JSON, then reading the JSON back into the agent. This might sound verbose, but is actually really good for decoupling and therefore more scalable, less fragile and more flexible. The downside is that there are more hoops to jump through due to the increased complexity.
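The decoupling idea is easy to picture with a few lines of standard-library Python: one side writes transitions as JSON, one object per line, and the training side reads them back without ever touching the environment. The field names and helper functions below are hypothetical and are not Horizon's actual schema or API.

```python
import io
import json


def dump_transitions(transitions, stream):
    """Write one JSON object per line to any writable text stream."""
    for transition in transitions:
        stream.write(json.dumps(transition) + "\n")


def load_transitions(stream):
    """Read the transitions back, skipping any blank lines."""
    return [json.loads(line) for line in stream if line.strip()]


logged = [
    {"state": [0.0, 0.1], "action": 1, "reward": 1.0,
     "next_state": [0.1, 0.2], "terminal": False},
    {"state": [0.1, 0.2], "action": 0, "reward": 0.0,
     "next_state": [0.2, 0.1], "terminal": True},
]

buffer = io.StringIO()  # stands in for a file on disk
dump_transitions(logged, buffer)
buffer.seek(0)

batch = load_transitions(buffer)
mean_reward = sum(t["reward"] for t in batch) / len(batch)
print(len(batch), mean_reward)
```

The agent only ever sees the file, so the data could just as easily have come from production logs as from Gym, which is where the flexibility comes from.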

However, as inconceivable as it sounds, there is no pip installer for Horizon. You have to use conda, install onnx, install java, setup the JAVA_HOME to point to conda, install Spark, install Gym (fair enough), install Apache thrift and then build Horizon. Wow. (Bonus points if you counted how many steps that was).

So I think suffice it to say that I’m not going to attempt to install this in the demo Notebook.

Getting Started

You’ll need a lot of time and a lot of patience. Follow the build instructions, then follow the training guide. I can’t vouch for it because I have a life to get on with.

Coach

The first thing you will notice when you look at this framework is the number of implemented algorithms. It is colossal and must have taken several people many weeks to implement. The second thing you will notice is the number of integrated Environments. Considering how much time this must have taken, it gives a lot of hope for the rest of the framework.

It comes with a dedicated dashboard which looks pretty nice. Most of the other frameworks rely on the Tensorboard project.

One particular wow feature that I haven’t seen before is inbuilt deployment for Kubernetes. I think that the orchestration of Coach by Coach is a step too far, but the fact that they’ve even thought about it means that it is probably scalable enough to deploy onto Kubernetes with standard tooling.

The level of modularity is astounding. For example there are classes that implement all sorts of exploration strategies and allow you to make all sorts of changes to the various model designs.
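To show why exploration strategies make good pluggable classes, here is a small sketch with two interchangeable strategies behind the same select method. It is plain Python and not Coach's API. EpsilonGreedy, Boltzmann and act are hypothetical names for illustration.

```python
import math
import random


class EpsilonGreedy:
    """With probability epsilon pick a random action, otherwise the best one."""

    def __init__(self, epsilon, rng=None):
        self.epsilon = epsilon
        self.rng = rng or random.Random(0)

    def select(self, q_values):
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(q_values))
        return max(range(len(q_values)), key=q_values.__getitem__)


class Boltzmann:
    """Sample actions in proportion to exp(q / temperature)."""

    def __init__(self, temperature=1.0, rng=None):
        self.temperature = temperature
        self.rng = rng or random.Random(0)

    def probabilities(self, q_values):
        top = max(q_values)  # subtract the maximum for numerical stability
        weights = [math.exp((q - top) / self.temperature) for q in q_values]
        total = sum(weights)
        return [w / total for w in weights]

    def select(self, q_values):
        actions = range(len(q_values))
        return self.rng.choices(actions, weights=self.probabilities(q_values))[0]


def act(q_values, exploration):
    """The agent does not care which strategy it has been given."""
    return exploration.select(q_values)


q_values = [0.1, 0.9, 0.3]
print(act(q_values, EpsilonGreedy(epsilon=0.0)))
print(Boltzmann(temperature=1.0).probabilities(q_values))
```

Swapping the strategy is then a one-argument change, which is the kind of experimentation that this level of modularity makes cheap.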

The ONLY thing that I can think of that is a little annoying is the same limitation of forcing me to use DL as the model. I remain convinced that a subset of simpler applications do not require anything nearly as complex as DL and could benefit from more traditional Regression methods. However, I’m sure it should be reasonably easy to add in a little stub class that removes the DL stuff.

Interestingly, the framework supports Tensorflow and MXNet due to its use of Keras. This means that PyTorch is not supported, because Keras doesn’t support PyTorch.

Frankly, I can’t understand why this framework is so unpopular in any way of measuring it. In terms of stars. In terms of the number of google pages (the answer is 7, if you are wondering). Compare that to Google Dopamine for example, with 16500 pages.

It is certainly the most comprehensive framework with the best documentation and a fantastic level of modularity. They’ve even got a ❤️ Getting Started Notebook ❤️.

Getting Started

There are two important notes I’d like to point out. First, make sure you are looking at a tagged version of the documentation or the demos. There are some new features in the master branch that don’t work with a pip installed version. Second, it depends on OpenAI Gym version 0.12.5, which isn’t installed in colab. You’ll need to run !pip install gym==0.12.5 and restart the runtime.

import tensorflow as tf
tf.reset_default_graph()  # So that we don't get an error for TF when we re-run

from rl_coach.agents.clipped_ppo_agent import ClippedPPOAgentParameters
from rl_coach.environments.gym_environment import GymVectorEnvironment
from rl_coach.graph_managers.basic_rl_graph_manager import BasicRLGraphManager
from rl_coach.graph_managers.graph_manager import ScheduleParameters
from rl_coach.core_types import TrainingSteps, EnvironmentEpisodes, EnvironmentSteps
from rl_coach.base_parameters import VisualizationParameters

global experiment_path; experiment_path = '.'  # Because of some bizarre global in the mp4 dumping code

# Custom schedule to speed up training. We don't really care about the results.
schedule_params = ScheduleParameters()
schedule_params.improve_steps = TrainingSteps(200)
schedule_params.steps_between_evaluation_periods = EnvironmentSteps(200)
schedule_params.evaluation_steps = EnvironmentEpisodes(10)
schedule_params.heatup_steps = EnvironmentSteps(0)

graph_manager = BasicRLGraphManager(
    agent_params=ClippedPPOAgentParameters(),
    env_params=GymVectorEnvironment(level='CartPole-v0'),
    schedule_params=schedule_params,
    vis_params=VisualizationParameters(dump_mp4=True)  # So we can dump the video
)

MAgent

MAgent is a framework that allows you to solve many-agent RL problems. This is a completely different aim compared to all the other “traditional” RL frameworks that use only a single or very few agents. They claim it can scale up to millions of agents.

But again, no pip installer. Please, everyone, create pip installers for your projects. It’s vitally important for ease of use and therefore project traction. I guess it’s because the whole project is written in C, presumably for performance reasons.

It’s using Tensorflow under the hood and builds its own gridworld-like environment. The Agents are designed with “real-life” simulations in mind. For example you can specify the size of the agent, how far it can see; things like that. The observations that are passed to the Agents are grids. The actions that they can take are limited to moving, attacking and turning. They are rewarded according to a flexible rule definition.
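To give a feel for grid observations with a limited view range, here is a tiny sketch that cuts the square window an agent can see out of a larger world. MAgent's real observation encoding is likely richer than this. The function local_view, the padding value and the cell codes are all hypothetical.

```python
def local_view(grid, row, col, view_range, pad=-1):
    """Return the square window an agent at (row, col) can see.

    Cells that fall outside the world are filled with the pad value.
    """
    window = []
    for r in range(row - view_range, row + view_range + 1):
        line = []
        for c in range(col - view_range, col + view_range + 1):
            inside = 0 <= r < len(grid) and 0 <= c < len(grid[0])
            line.append(grid[r][c] if inside else pad)
        window.append(line)
    return window


# 0 = empty, 1 = this agent, 2 = another agent (hypothetical encoding)
world = [
    [0, 0, 0, 0],
    [0, 1, 0, 2],
    [0, 0, 0, 0],
]

print(local_view(world, 1, 1, view_range=1))
print(local_view(world, 0, 3, view_range=1))
```

With a view range of 1 the first agent cannot see the second, whereas a range of 2 would bring it into view. That is the kind of design knob the framework exposes.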

In short, the framework is setup to handle game of life style games out of the box, with some extra modularity on how the agents behave and are rewarded. Due to this we can use some of the more advanced DL methods to train the agents to perform complex, coordinated tasks. Like surround prey so that it can’t move. You can learn more in the getting started guide.

I am very impressed with the idea. But as you can see from the GitHub stats above, 4 commits a year basically means that it is hardly being used. The last major updates were in 2017. This is a shame, because it represents something very different compared to the other frameworks. It would be great if someone could make it easier to use, for example by replicating the framework in idiomatic Python.

Getting Started

So I almost got it working in the Notebook. I tried a few examples from the getting started guide. The training version takes hours, so I bailed on that quite quickly. The examples/api_demo.py however is just testing the learnt models, so that is lightning quick.

However, it renders the environment in some proprietary text format. You need to run a random webserver binary that parses and hosts the render in the browser. Because we’re in colab, it doesn’t allow you to run a webserver. So I tried downloading the files, but we built the binaries on colab, not on a mac, so I wasn’t able to run the binary.

So that was a bit frustrating. It would have been much simpler if it had just rendered it in some standard format like mp4 or a gif or something. And also disappointing, because I was looking forward to generating some complex behavior.

But just so you are not disappointed, here is some eye candy from the author. Please excuse the audio!

And here are the remains of the code that worked:

!git clone https://github.com/geek-ai/MAgent.git

!sudo apt-get install cmake libboost-system-dev libjsoncpp-dev libwebsocketpp-dev

%cd MAgent

!bash build.sh

!PYTHONPATH=$(pwd)/python:$PYTHONPATH python examples/api_demo.py

You can swap that last call for any of the Python files in the examples folder.

TF-Agents

Tensorflow-Agents (TF-Agents) is another NOGP from Google with the focus squarely on Tensorflow. So treat this as direct competition to TRFL, Tensorforce and Dopamine.

Which begs the question: why have more Google employees created another Tensorflow-abstraction-for-RL when TRFL and Dopamine already exist? In an issue discussing the relationship between TF-Agents and Dopamine the contributors suggest that:

it seems that Dopamine and TF-Agent strongly overlap. Although dopamine aims at being used for fast prototyping and benchmarking as the reproducibility has been put at the core of the project, whereas TF-Agent would be more used for production-level reinforcement learning algorithms.

To be honest, I’m not sure what “production-level” means at this point. There are some great colab examples, but there is no documentation. And you certainly shouldn’t be using Notebooks in production.

Once you start digging into the examples, then it becomes clear that the code is very Tensorflow heavy. For example, the simple Cartpole example has a lot of lines of code. Mainly because there is a lot of explanation and debugging code in there, but it looks like a sign of things to come.

I must admit though, the code does look very nice. It’s nicely separated and the modularity looks good. All the abstractions you would expect are there. The only thing I want to pick on is the Agent abstraction. This is the base class and it is directly coupled to Tensorflow. It is a Tensorflow module. This adds a significant amount of complexity and I wish that it was abstracted away so I didn’t have to worry about it until I need it. The same is true for the vast majority of other abstractions; they are all Tensorflow modules.

With that said, it is clear that this is a far more serious and capable library than TRFL.

Getting Started

There is already an extensive set of Notebooks available in their repository, so I won’t waste time just copy and pasting here. You can also run them directly in colab too.

The video below shows three episodes of the cartpole. To me it looks like it is having a fit. Constant nudges to rebalance.

SLM-Lab

SLM-Lab is a modular RL framework based upon PyTorch. It appears to be aimed more towards researchers. They stress the importance of modularity, but rightly state that being simple and modular is probably not possible; it is a compromise between the two. Interestingly it also uses the Ray project under the hood to make it scalable.

Despite only starting in 2017, and given the small number of contributors and its relative popularity in terms of Github stars, there is lots of activity. The vast majority of the commits are one-liners, but the commitment by the authors is amazing.

Unfortunately this is another non-pip install framework and attempts to install a whole load of build related C libraries and miniconda. Which is problematic in colab. Being a good Engineer I ignored all the documentation and tried to get it working myself through trial and error. It almost worked, but I stumbled across a problem when initialising pytorch that I didn’t know how to fix.

So unfortunately, again, you will have to settle for an example image from the authors.

I struggled a bit with the documentation. The architecture documentation is limited and the rest is focused towards usage. But by usage I mean running experiments on current implementations. I struggled to find the documentation that told me how to plug modules together in different ways. I presume they intend that this should be done via the JSON spec files. Indeed the original motivation was:

There was a need for a framework that would allow us to compare algorithms and environments, quickly set up experiments to test hypotheses, reuse components, analyze and compare results, log results.

So the goal here is to allow reuse via configuration, much like Dopamine and Tensorforce. This “RL as configuration” seems to be a theme! However, I’m not convinced. I would argue that code is more idiomatic, more flexible. It is what people are used to. Every time you do something via configuration that is another Domain Specific Language (DSL) that users have to learn. And because that DSL is generally static (Gin is not) then the implementation of the DSL sets the limitation. It is never going to suit everyone because there will be an edge case that isn’t covered by the DSL.
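To make the trade-off concrete, here is a minimal sketch of the two styles. Everything in it (the `REGISTRY`, `build_from_spec`, `Greedy`, `EpsilonGreedy` and `AlwaysFirst`) is hypothetical and not taken from SLM-Lab, Dopamine or Tensorforce. It only illustrates why a static spec cannot express anything that its parser did not anticipate.

```python
import json
import random


class Greedy:
    """Always picks the action with the highest value."""

    def select(self, values):
        return max(range(len(values)), key=values.__getitem__)


class EpsilonGreedy:
    """Picks a random action with probability epsilon, else the greedy one."""

    def __init__(self, epsilon, rng=None):
        self.epsilon = epsilon
        self.rng = rng or random.Random(0)

    def select(self, values):
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(values))
        return Greedy().select(values)


# Configuration style: the spec can only name things the registry knows about.
REGISTRY = {"greedy": Greedy, "epsilon_greedy": EpsilonGreedy}


def build_from_spec(spec_json):
    spec = json.loads(spec_json)
    cls = REGISTRY[spec["type"]]  # KeyError for anything the spec format did not foresee
    return cls(**spec.get("params", {}))


policy_from_config = build_from_spec(
    '{"type": "epsilon_greedy", "params": {"epsilon": 0.0}}'
)


# Code style: any object with a select() method works, no registry needed.
class AlwaysFirst:
    def select(self, values):
        return 0


policy_from_code = AlwaysFirst()
```

Adding `AlwaysFirst` to the configuration style means touching the registry, which is exactly the edge-case problem: the user has to change the framework, not just their own code.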

DeeR

The initial impression of DeeR is a good one. It has a pip installer. It waxes lyrical about modularity. It has only two Python dependencies; numpy and Joblib. So no nasty C make process to get it working, great!

The documentation is clear, but is missing some overall architecture documentation. You have to dig into the classes/code to find the documentation. But when you do it is good.

The “modules” are split mostly in the way you would expect. Modules for the Environment, Agent, and Policies.

There is an interesting class called Controller which is not standard. This class provides lifecycle hooks that you can attach to; events like the end of an episode or whenever an action is taken. For example, if you wanted to do some logging at the end of an episode, then you could subclass this class and override the onEpisodeEnd. There are several example controllers, one of which is an EpsilonController. This allows you to dynamically alter the eta or epsilon value in e-greedy algorithms.
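As a rough illustration of the hook pattern, the sketch below mimics it without importing DeeR. The `Controller`, `EpisodeLogger` and `ToyAgent` classes are stand-ins written for this post. Only the `onEpisodeEnd` hook name and the `attach()` call come from DeeR itself, and the argument lists here are assumptions that may not match the real signatures.

```python
class Controller:
    """Stand-in base class: every hook is a no-op unless overridden."""

    def onEpisodeEnd(self, agent, episode_return):
        pass


class EpisodeLogger(Controller):
    """Records the return of each finished episode."""

    def __init__(self):
        self.history = []

    def onEpisodeEnd(self, agent, episode_return):
        self.history.append(episode_return)


class ToyAgent:
    """Stand-in agent that calls the hooks of every attached controller."""

    def __init__(self):
        self._controllers = []

    def attach(self, controller):
        self._controllers.append(controller)

    def run(self, episode_returns):
        # Pretend each number is the return of one finished episode.
        for episode_return in episode_returns:
            for controller in self._controllers:
                controller.onEpisodeEnd(self, episode_return)


logger = EpisodeLogger()
agent = ToyAgent()
agent.attach(logger)
agent.run([1.0, 0.5, 2.0])
print(logger.history)
```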

This is quite powerful as it allows you to alter the learning process in mid flight. But from a Software Engineering perspective this is quite risky. Any Functional Programmer will tell you not to mutate the state of another object because “Dragons be here”. It would have been nicer if the API was more functional and you could pass in functions that computed, for example, the agent’s next eta, rather than mutating the state of the agent directly. This may make it slightly more complex, though.
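Here is a sketch of what that more functional style could look like. The `ScheduledAgent` class and the `linear_decay` helper are hypothetical and not part of DeeR. The agent asks a pure function for its epsilon at each step, rather than letting an outside object overwrite its state.

```python
import random
from typing import Callable


def linear_decay(start: float, end: float, steps: int) -> Callable[[int], float]:
    """Return a pure function mapping a step count to an epsilon value."""

    def schedule(step: int) -> float:
        fraction = min(step / steps, 1.0)
        return start + fraction * (end - start)

    return schedule


class ScheduledAgent:
    """Hypothetical e-greedy agent whose epsilon is computed, never set from outside."""

    def __init__(self, epsilon_fn: Callable[[int], float], seed: int = 0):
        self._epsilon_fn = epsilon_fn
        self._rng = random.Random(seed)
        self.step = 0

    @property
    def epsilon(self) -> float:
        return self._epsilon_fn(self.step)

    def act(self, q_values):
        explore = self._rng.random() < self.epsilon
        self.step += 1
        if explore:
            return self._rng.randrange(len(q_values))
        return max(range(len(q_values)), key=q_values.__getitem__)


agent = ScheduledAgent(linear_decay(start=1.0, end=0.1, steps=100))
for _ in range(100):
    agent.act([0.0, 1.0])
```

The cost is the extra indirection: swapping the schedule means constructing the agent with a different function, rather than attaching another controller part-way through a run.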

The framework also contains a few learning algorithms but it’s certainly not as comprehensive as something like Coach.

Getting Started

Thanks to the pip install and very few dependencies, this was probably the easiest framework to get up and running.

!pip install git+https://github.com/VinF/deer.git@master

!git clone https://github.com/VinF/deer.git

I cloned the git repo so I could run the examples. Then it’s just a case of importing everything:

%cd /content/deer/examples/toy_env

import numpy as np

from deer.agent import NeuralAgent

from deer.learning_algos.q_net_keras import MyQNetwork

from Toy_env import MyEnv as Toy_env

import deer.experiment.base_controllers as bc

And stealing the example:

rng = np.random.RandomState(123456)

# --- Instantiate environment ---

env = Toy_env(rng)

# --- Instantiate qnetwork ---

qnetwork = MyQNetwork(

    environment=env,

    random_state=rng)

# --- Instantiate agent ---

agent = NeuralAgent(

    env,

    qnetwork,

    random_state=rng)

# --- Bind controllers to the agent ---

# Before every training epoch, we want to print a summary of the agent's epsilon, discount and

# learning rate as well as the training epoch number.

agent.attach(bc.VerboseController())

# During training epochs, we want to train the agent after every action it takes.

# Plus, we also want to display after each training episode (!= than after every training) the average bellman

# residual and the average of the V values obtained during the last episode.

agent.attach(bc.TrainerController())

# All previous controllers control the agent during the epochs it goes through. However, we want to interleave a

# "test epoch" between each training epoch. We do not want these test epoch to interfere with the training of the

# agent. Therefore, we will disable these controllers for the whole duration of the test epochs interleaved this

# way, using the controllersToDisable argument of the InterleavedTestEpochController. The value of this argument

# is a list of the indexes of all controllers to disable, their index reflecting in which order they were added.

agent.attach(bc.InterleavedTestEpochController(

    epoch_length=500,

    controllers_to_disable=[0, 1]))

# --- Run the experiment ---

agent.run(n_epochs=100, epoch_length=1000)

Here we are instantiating an environment, creating the Q-Learning algorithm and creating the agent that uses that algorithm. Next we use the .attach() function on the agent to append all of these Controllers we have been talking about. They add logging and interleave training periods and testing periods.

If we wanted to edit any of this we just need to reimplement the piece that we’re interested in. Great!

The only issue was that the toy example didn’t work! 🤦 The training values looked a little weird in that the test score was always 0, and the training loss increased over time. Maybe it got stuck. I’m not sure why, but it’s probably something silly.

Garage

Garage is a follow-on from rllab with the same aims, but with community, rather than individual, support. The documentation is a little sparse. For instance, it doesn’t highlight that it implements a large number of algorithms. And also a huge number of policies. In fact, there’s pretty much everything you could ever need in this quiet little directory.

But it is very tightly coupled to Tensorflow, if that is a problem for you.

There’s so much functionality here, but it is completely hidden. The code is reasonably well documented, but it’s not exposed. You have to dig through it to find it.

Again there is no pip installer. Just some custom conda install and some apt-get dependencies.

So I can see that there is a huge amount of value in the algorithm implementations, but I’m going to skip the getting started this time.

Surreal

Surreal is a suite of applications. Foremost it is an RL framework. But to make sure they don’t build just another framework, they also provide a new robotics simulator, an orchestrator, a cloud infrastructure provisioner and a protocol for distributed computing. It comes from Stanford, hence academic in approach and use-case, hence the default use of PyTorch.

I’m all for the framework and the simulator, but it would have been easier if they had just used standard industrial components for the orchestrator (Kubernetes), infrastructure (Terraform) and protocol (Kafka/Nats/etc/etc). Those problems have already been solved. (Correction haha. Once I dug into the getting started guide, it became clear that they are using Kubernetes and Terraform. Great choices! 😂)

The robotics simulator is a collection of MuJoCo simulations. So that is a great addition to the Environment list (despite the licensing terms of MuJoCo).

The RL framework needs a bit of coaxing into life. It’s another combination of apt-gets and conda installs.

Oh wow. I’ve just noticed that they’ve disabled the Github issue tracker. And there’s an explicit copyright notice that belongs to each of the authors. OK, so this isn’t even open source.

But the robosuite is MIT licenced?

Very strange. Stopping here due to the lack of issues and weird licensing.

RLgraph

So let’s start by saying RLgraph has a whopping number of commits. They’re running at 4000 commits per year. Compare that to OpenAI Gym at just 221. Somebody needs to tell these five people to have a holiday. And it’s only a year old. I can only imagine that it is being used full time.

But anyway. Like other frameworks they are focusing on scalability. But interestingly they are mapping directly to both Tensorflow and Pytorch. They are not using Keras. So that must have been a massive challenge in itself. It looks like they’ve used the Ray project to distribute work like SLM-Lab.

But hurray! They have a pip installer. The configuration of the agents is controlled via JSON. But only the configuration. Not the construction.

I’ve just read that the authors also worked on Tensorforce, which explains some of the déjà vu I have been feeling. And I love that my complaints in Tensorforce, about how the underlying DL framework often leaks into the RL implementation code, have been addressed in RLgraph. I get the feeling that they’ve been listening to me rant to my bored wife.

Separating spaces of tensors from logical composition enables us to reuse components without ever manually dealing with incompatible shapes again. Note how the above code does not contain any framework-specific notions but only defines an input dataflow from a set of spaces.

Just what I always wanted. And this is achieved with an abstraction of inputs and outputs. Other than that, the API is familiar. An Environment and an Agent. There’s a very cool Component class that abstracts the DL building blocks.
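To give a flavour of the idea, here is a framework-free sketch. It is not RLgraph's API: `Space`, `Dense` and `Stack` are hypothetical names. The only point is that components are wired together against declared input spaces, so a shape mismatch surfaces when the graph is built rather than deep inside Tensorflow or PyTorch.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Space:
    """Describes the shape of one sample, with no tensors involved."""

    shape: Tuple[int, ...]


class Dense:
    """Hypothetical component: maps a flat space of any size to `units` values."""

    def __init__(self, units: int):
        self.units = units
        self.weight_shape = None  # unknown until an input space is supplied

    def build(self, input_space: Space) -> Space:
        if len(input_space.shape) != 1:
            raise ValueError(f"Dense expects a flat space, got {input_space.shape}")
        self.weight_shape = (input_space.shape[0], self.units)
        return Space((self.units,))


class Stack:
    """Chains components, threading each output space into the next build."""

    def __init__(self, *components):
        self.components = components

    def build(self, input_space: Space) -> Space:
        space = input_space
        for component in self.components:
            space = component.build(space)
        return space


# CartPole-like setup: 4 observation values in, 2 action values out.
network = Stack(Dense(16), Dense(2))
output_space = network.build(Space((4,)))
print(output_space)
```

Neither `Dense` nor `Stack` knows which DL backend will eventually allocate the weights, which is roughly the separation the quote describes.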

However, there are abstractions that are missing here. There are no Policy abstractions. No exploration abstractions. Basically all the nice abstractions from Nervana Systems Coach.

But I’m still pretty impressed.

Getting Started

I altered the cartpole getting started example a little to use the SingleThreadedWorker and enable rendering on the environment to get the video output. Other than that it all looks very familiar.

import numpy as np

from rlgraph.agents import DQNAgent

from rlgraph.environments import OpenAIGymEnv

from rlgraph.execution import SingleThreadedWorker

environment = OpenAIGymEnv('CartPole-v0', monitor=".", monitor_video=1, visualize=True)

# Create from .json file or dict, see agent API for all

# possible configuration parameters.

agent = DQNAgent.from_file(

    "configs/dqn_cartpole.json",

    state_space=environment.state_space,

    action_space=environment.action_space

)

episode_returns = []

def episode_finished_callback(episode_return, duration, timesteps, **kwargs):

    episode_returns.append(episode_return)

    if len(episode_returns) % 10 == 0:

        print("Episode {} finished: reward={:.2f}, average reward={:.2f}.".format(

            len(episode_returns), episode_return, np.mean(episode_returns[-10:])

        ))

worker = SingleThreadedWorker(env_spec=lambda: environment, agent=agent, render=True, worker_executes_preprocessing=False,

                              episode_finish_callback=episode_finished_callback)

print("Starting workload, this will take some time for the agents to build.")

# Use exploration is true for training, false for evaluation.

worker.execute_timesteps(1000, use_exploration=True)

Simple RL

And finally, simple_rl. All other frameworks state that their goals are performance/scalability or modularity or repeatability. None of them set out to be simple. This is where simple_rl steps in. Built from the ground up to be as simple as possible. It only has two dependencies, numpy and matplotlib. And that’s only if you want to plot the results. Basically it’s just numpy. It has a pip installer. The documentation is non-existent but that’s ok, who needs docs? ;-)

It presents a familiar collection of abstractions: an agent, an experiment, an environment that is called an mdp. The framework also abstracts other parts of the model like an action, feature, state. And a planning class that implements the strategies for next actions. It is still very modular, but some of the naming conventions should be changed to match the other frameworks (standardisation).

So it is becoming apparent that “simple” doesn’t necessarily mean easy to understand. Generally, more abstraction makes it harder to understand. Simple in this case is “ease of use”. I think that’s a shame. I really was hoping for simplicity in terms of understanding. But it looks like it is aiming to compete with some of the more complex frameworks; Deep RL support with PyTorch is in development.

There continues to be a gap in the framework market for a very simple, understandable RL framework. And I’m not sure why this framework has so few stars compared to the rest. Presumably because it’s not bootstrapping on the popularity of other DL frameworks, like many of the other frameworks.

Getting Started

It should have been simple. But deep inside the code there are a few lines that force Matplotlib to use the TkAgg backend. I tried to get TkAgg working in the Notebook but could not. It is designed for graphical desktop use, so you can imagine that it is not straightforward. I created an issue here. It should be a simple fix.

If/When it works, it should be as simple as something like:

from simple_rl.agents import QLearningAgent, RandomAgent, RMaxAgent

from simple_rl.tasks import GridWorldMDP

from simple_rl.run_experiments import run_agents_on_mdp

# Setup MDP.

mdp = GridWorldMDP(width=4, height=3, init_loc=(1, 1), goal_locs=[(4, 3)], lava_locs=[(4, 2)], gamma=0.95, walls=[(2, 2)], slip_prob=0.05)

# Setup Agents.

ql_agent = QLearningAgent(actions=mdp.get_actions())

rmax_agent = RMaxAgent(actions=mdp.get_actions())

rand_agent = RandomAgent(actions=mdp.get_actions())

# Run experiment and make plot.

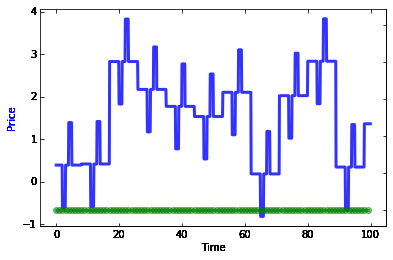

run_agents_on_mdp([ql_agent, rmax_agent, rand_agent], mdp, instances=5, episodes=50, steps=10)

This trains a few different agents and produces a reward plot for each. Nice eh! The only thing I would suggest is that there shouldn’t be any environment implementation in simple_rl. That is out of scope. Leave that to Gym-like projects. For example, gym-minigrid has an awesome Gridworld implementation.


Google Rankings

Google’s Trends search tool allows you to find out what search queries are the most popular. Unfortunately they only provide relative measures and those change depending on what you are querying. Also, common words often get mixed into other queries. For example, searching for “Facebook Horizon” is mixed with a bunch of unrelated queries about “Forza Horizon 4” and “facebook log in”; clearly this inflates this score and cannot be trusted.
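The effect of relative scoring is easy to reproduce. The sketch below assumes, as a simplification, that every series in a comparison is rescaled so that the largest value across the whole comparison becomes 100. The search counts and the term names are made up for illustration and are not real Trends data.

```python
def relative_scores(raw_counts):
    """Rescale all series so the single largest value in the comparison is 100."""
    peak = max(max(series) for series in raw_counts.values())
    return {
        term: [round(100 * value / peak) for value in series]
        for term, series in raw_counts.items()
    }


# Made-up weekly search counts, purely illustrative.
counts = {
    "framework a": [40, 50, 45],
    "framework b": [5, 10, 8],
}

alone = relative_scores({"framework b": counts["framework b"]})
compared = relative_scores(counts)

print(alone["framework b"])     # scored against its own peak
print(compared["framework b"])  # scored against framework a's peak
```

Under this assumption the same raw counts for "framework b" score very differently depending on what they are compared against, which is why the numbers are hard to interpret on their own.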

I went through all of these frameworks and found that only two frameworks stood out, openai gym and google dopamine. But even for google dopamine, the related queries were google docs/scholar/translate/etc., so I’m not sure if I can trust this either.

One of the things that stood out to me most was the geographical popularity. OpenAI Gym seemed to be the most popular search term, given that it has a high ranking score and all of the related queries are related to RL. But when you look at how the ranking alters by geography, China is the country with the most searches.

This strikes me as odd, because Google is banned in China and so how are they generating these statistics? Are users using VPNs and then searching, and Google is able to recognise that the original traffic is from China?

Don’t Trust Google Trends

All of this brings me to the conclusion that I can’t trust Google Trends at all. OpenAI Gym does seem like the highest ranking RL related framework, which you might expect, but the bulk of that score is coming from China. But Google is blocked in China. Sooo….. 🤷

If you are evaluating RL frameworks for a business application and would like guidance on selecting the right tools for your use case, our reinforcement learning consulting team has extensive experience helping organizations navigate these choices.